I watched a client's citation disappear from ChatGPT on a Monday. It came back on Wednesday. By Friday, a competitor had taken its place. Same query. Same week. No changes to the underlying content on either side.

That experience broke something in how I thought about AI visibility. In traditional SEO, if you rank on page one today, you will probably rank on page one tomorrow. Citations in AI search do not work that way. They are probabilistic, volatile, and shaped by a pipeline most marketers have never seen.

When I dug into the patents, I realized citations are not a binary "you have it or you don't." There is a verification pipeline happening behind every single AI-generated answer. Understanding that pipeline is what separates hoping for citations from engineering them. This article is the forensic science playbook I wish I had when I started tracking AI citations for clients at MaximusLabs.

Q1. What Is Citation Tracking in AI Search and Why Does It Matter? [toc=Citation Tracking Defined]

Citation tracking in AI search is the systematic monitoring of when, where, and why large language models cite your content as a source in their generated responses. Unlike traditional rank tracking, which monitors a fixed position on a SERP, citation tracking must account for the probabilistic nature of AI answers, where 40 to 60 percent of citations change within a single month [1]. It requires repeated statistical sampling, not one-time snapshots, to produce meaningful data.

Why This Is Fundamentally Different from Rank Tracking

Traditional SEO gave us a comforting illusion: check your ranking once a day, and you have a reliable signal. Position 3 today probably means position 3 tomorrow, barring an algorithm update. That model collapsed when AI search entered the picture.

When ChatGPT generates an answer, it does not consult a static index of ranked pages. It runs a multi-stage retrieval and verification process that produces different results depending on the run. Google AI Overviews recorded 59.3 percent citation drift between June and July 2025, meaning nearly 6 out of every 10 cited sources were different one month later [1]. Perplexity showed 40.5 percent monthly drift. ChatGPT landed at 54.1 percent [1].
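To make the drift figure concrete, one reasonable reading of "citation drift" is the share of sources cited in the first period that are no longer cited in the second. The sketch below computes drift under that assumption; the exact definition used in [1] may differ, and the domain names are hypothetical.

```python
def citation_drift(before: set[str], after: set[str]) -> float:
    """Share of sources cited in `before` that are absent from `after`.

    Assumes drift = 1 - |before & after| / |before|; returns 0.0 when
    nothing was cited in the first period.
    """
    if not before:
        return 0.0
    retained = before & after
    return 1 - len(retained) / len(before)


# Hypothetical citation sets for one query, one month apart.
june = {"a.example", "b.example", "c.example", "d.example", "e.example"}
july = {"a.example", "b.example", "x.example", "y.example", "z.example"}
print(f"drift: {citation_drift(june, july):.0%}")  # 3 of 5 June sources are gone
```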

The Fragility of AI Citations

These are not edge cases. This is the baseline behavior of AI search. Every citation your brand holds today has roughly a coin-flip chance of being gone next month.

I've found that most marketing teams discover this reality the hard way. They check their AI visibility once a quarter, see a citation in ChatGPT, celebrate, and then wonder three months later why pipeline from AI search has flatlined. The citation was gone within weeks. They just were not looking.

What Citation Tracking Actually Requires

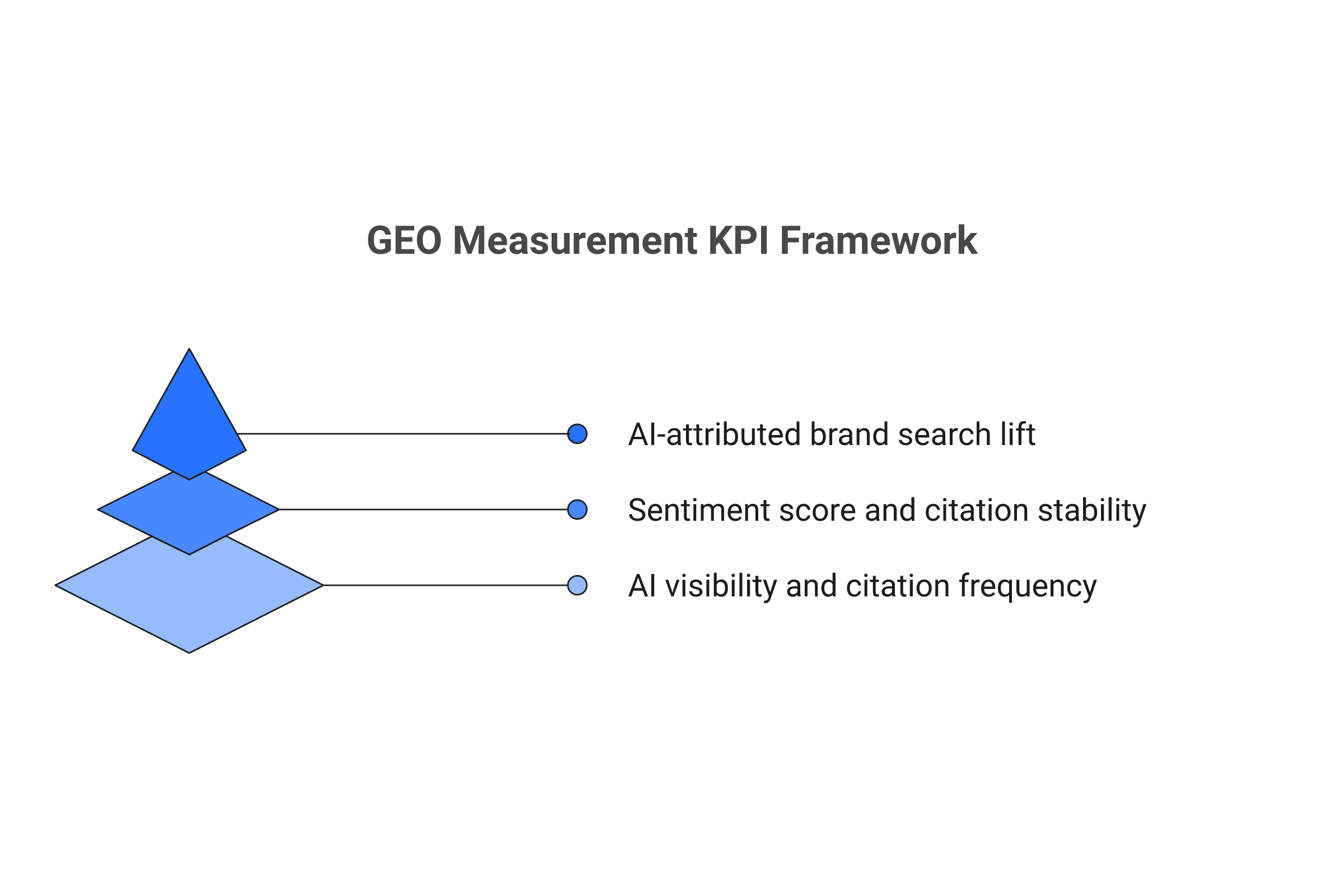

Effective citation tracking operates at three distinct levels as part of any serious GEO measurement practice:

- Binary presence: Is your domain cited for a given query on a given platform?

- Passage-level alignment: Which specific paragraph or section on your page is the AI referencing?

- Stability over time: What percentage of your citations persist across 7-day, 14-day, and 30-day measurement windows?

Princeton's ALCE benchmark established that even the best LLMs only properly support their claims with citations about half the time [2]. When citation behavior is this inconsistent, single-query snapshots are statistically meaningless. You need a minimum of 30 sampling runs per query per platform to achieve any kind of confidence in your citation tracking data.
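To see why repeated runs matter, treat each run as an independent yes/no trial (a simplifying assumption, since platforms may cache or personalize results). Your observed citation rate is then an estimate with a margin of error. This sketch uses the Wilson score interval, a standard confidence interval for proportions, to show how that margin shrinks as the number of runs grows:

```python
import math


def wilson_interval(cited: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a citation rate.

    Treats each sampling run as an independent Bernoulli trial, which is a
    simplifying assumption for AI search platforms.
    """
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = cited / runs
    z2 = z * z
    denom = 1 + z2 / runs
    center = (p + z2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z2 / (4 * runs * runs)) / denom
    return max(0.0, center - half), min(1.0, center + half)


for n in (1, 10, 30, 100):
    lo, hi = wilson_interval(cited=n // 2, runs=n)
    print(f"N={n:>3}: observed {n // 2}/{n}, plausible range {lo:.0%} to {hi:.0%}")
```

With a single run, the plausible range stretches from 0 to nearly 80 percent. At N = 30 and a 50 percent observed rate, it narrows to roughly plus or minus 17 percentage points, which suggests 30 runs is a sensible floor rather than a luxury.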

As I covered in our GEO Measurement overview, the entire measurement discipline for AI search is built on probabilistic foundations rather than deterministic ones. Citation tracking is where that reality becomes most visible.

Your Minimum Viable Tracking Protocol

If you are starting from zero, here are three actions you can take this week:

- Pick 10 money queries for your product category and run each one on ChatGPT, Perplexity, and Google AI Overviews. Record whether you are cited, which URL is cited, and what competing domains appear.

- Repeat the same 10 queries next week at the same time. Compare the citation sets. You will immediately see drift in action.

- Identify your single strongest citation (the query where you appear most consistently) and begin tracking it daily as your baseline metric.

This gives you a foundation of real data within 14 days. From there, you can scale to the full monitoring workflow I describe in Q8.

Q2. How Do LLMs Decide What to Cite? The Citation Insertion Pipeline [toc=Citation Insertion Pipeline]

LLMs cite content through a multi-stage pipeline that identifies verifiable claims in the generated response, searches for semantically matching passages, evaluates alignment against a confidence threshold, and then inserts the citation link. Google's patent portfolio reveals two distinct pathways for this process: content-first, where passages are retrieved before the answer is generated, and generate-first, where the answer is written first and then verified against external sources [3].

The Five-Stage Citation Decision Tree

When I first read through Google's patent US11886828B1, what struck me was how mechanical the process is. Which content gets cited is far less random than it looks from the outside: every citation passes through the same rule-driven pipeline, even though the overall system behavior appears probabilistic.

Here is the pipeline, derived from patent analysis:

Stage 1: Claim Identification

The model scans its generated response and identifies statements that are verifiable factual assertions rather than opinions or general knowledge. A sentence like "Customer acquisition costs increased 23% year-over-year" triggers the verification pipeline. A sentence like "Marketing is important" does not.

Stage 2: Candidate Passage Selection

For each verifiable claim, the system searches retrieved documents for semantically matching passages. In the generate-first pathway, it can issue entirely new search queries using the claim text as the query. This is a critical distinction: your content can be discovered and cited even if it was not in the original retrieval set [3].

The Search Behind the Search

The generate-first pathway means the AI effectively searches for evidence after writing its answer. This is like a journalist writing a story from memory and then fact-checking each claim against source documents. If your content shows up during that fact-check, you get cited.

Stage 3: Semantic Alignment Verification

The candidate passage is evaluated for semantic alignment with the generated claim. Google's patent US20250156456A1 introduces a "grounding quality" metric that quantifies how well each statement in an answer is attributed to source documents [4]. If the alignment score exceeds an internal confidence threshold, the citation moves forward. If not, the system loops back to try additional candidate passages.
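A toy sketch of that threshold-and-loop logic, for intuition only. It is not Google's implementation: the patent describes a grounding quality metric [4], while this sketch substitutes simple word overlap (Jaccard similarity) as a stand-in score, and the 0.3 threshold is an arbitrary assumption. Note that the loop can end with no citation at all, which is one route to the citation suppression discussed in Q5.

```python
import re
from typing import Optional


def tokens(text: str) -> set[str]:
    """Lowercased word tokens; a crude stand-in for a semantic representation."""
    return set(re.findall(r"[a-z0-9%]+", text.lower()))


def alignment(claim: str, passage: str) -> float:
    """Jaccard overlap between claim and passage tokens (stand-in score)."""
    a, b = tokens(claim), tokens(passage)
    return len(a & b) / len(a | b) if a | b else 0.0


def select_citation(claim: str, candidates: list[str],
                    threshold: float = 0.3) -> Optional[str]:
    """Try candidates in retrieval order; return the first that clears the
    threshold, or None if none do (the claim goes uncited)."""
    for passage in candidates:
        if alignment(claim, passage) >= threshold:
            return passage
    return None


claim = "Customer acquisition costs increased 23% year-over-year"
candidates = [
    "Marketing budgets are under pressure this year.",
    "Customer acquisition costs increased 23% year-over-year in our survey.",
]
print(select_citation(claim, candidates))
```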

Stage 4: Snippet Compression

The cited passage may be compressed or paraphrased when displayed to the user. The system extracts the most relevant portion of the source passage rather than quoting it verbatim.

Stage 5: Citation Linkification

The citation is attached to the answer with a link to the source page. These links often use scroll-to-text fragments (#:~:text=) to direct users to the exact passage that supports the claim [5].

Content-First vs. Generate-First: Why Both Matter

The distinction between these two pathways changes how you optimize for citations. This is the core of any effective GEO content optimization strategy.

In the content-first pathway, the AI retrieves documents first, then synthesizes an answer from those documents. Your content needs to directly answer the query. Traditional SEO optimization and query matching work well here.

In the generate-first pathway, the AI writes an answer from its training knowledge, then searches for documents to verify each claim. Your content needs to contain verifiable facts, statistics, and clearly stated claims that match what the AI has already written [3].

I think of it this way: content-first is about being the source. Generate-first is about being the proof. You need to be both.

This is the difference between hoping for citations and engineering them. When you understand the pipeline, you can reverse-engineer what the verification loop is looking for and structure your content accordingly.

Q3. Why Do AI Citations Keep Changing? Understanding Citation Drift [toc=Citation Drift Explained]

AI citations change because of four documented factors: probabilistic temperature settings that create run-to-run variance, continuous index updates that introduce competing documents, regular model fine-tuning that shifts ranking preferences, and user-level personalization that alters retrieval paths. Google AI Overviews drift 59.3 percent monthly, ChatGPT drifts 54.1 percent, and Perplexity drifts 40.5 percent [1].

The Situation: You Got Cited

Imagine this. You check your AI visibility on a Monday morning. ChatGPT is citing your comprehensive guide for a high-value query. Your passage appears as the second source. You take a screenshot, share it with the team, and move on.

The Complication: Nothing You Did Changed

Two weeks later, you check again. Your citation is gone. Not moved, not pushed down. Gone. The AI is now citing a competitor who published a shorter, less comprehensive article three days after your last check.

You have not changed your content. You have not lost any backlinks. Your traditional SEO metrics look identical. Yet your AI citation vanished.

The Four Drivers of Citation Drift

This is not a bug. It is a feature of how AI search works, driven by four distinct mechanisms.

Driver 1: Probabilistic Generation (Temperature)

Most LLMs sample their outputs using a "temperature" parameter that controls randomness. Even with identical inputs, the model can select different tokens on different runs, and because each token conditions the next, an early difference cascades through the rest of the response. The citations attached to that response can therefore shift even when nothing external has changed [6].
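A toy simulation of how temperature alone produces run-to-run variance. It assumes, purely for illustration, that the system picks one source by softmax sampling over fixed relevance scores; real systems sample tokens rather than sources, so treat this as an analogy. The scores and domains are made up.

```python
import math
import random


def pick_source(scores: dict[str, float], temperature: float,
                rng: random.Random) -> str:
    """Sample one source with probability proportional to exp(score / T)."""
    if temperature <= 0:
        return max(scores, key=scores.get)  # greedy: the top score always wins
    names = list(scores)
    top = max(scores.values())
    # Subtract the max before exponentiating for numerical stability.
    weights = [math.exp((scores[n] - top) / temperature) for n in names]
    return rng.choices(names, weights=weights, k=1)[0]


# Hypothetical relevance scores for three close competitors.
scores = {"yoursite.example": 2.0, "rival-a.example": 1.9, "rival-b.example": 1.6}
rng = random.Random(42)
for t in (0.0, 0.2, 1.0):
    picks = [pick_source(scores, t, rng) for _ in range(100)]
    share = picks.count("yoursite.example") / len(picks)
    print(f"T={t}: yoursite.example cited in {share:.0%} of 100 runs")
```

At T = 0 the same source wins every run. As temperature rises, close competitors start winning a share of runs even though nothing about the content changed.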

Driver 2: Index Freshness Updates

The retrieval indexes that feed AI search engines update continuously. When a new document enters the index, it becomes a candidate for citation. A competitor publishing a strong article on your topic introduces a new passage that the pairwise ranking system must evaluate against yours [7].

Driver 3: Model Fine-Tuning

AI companies regularly update and fine-tune their models. Google's patent US12437016B2 describes fine-tuning LLMs using reinforcement learning with search engine feedback [8]. Each model update can shift the ranking preferences that determine which passages win the pairwise comparison.

Driver 4: Personalization

Google's AI Mode patent US20240289407A1 describes persistent user embedding vectors that condition all downstream processing [9]. Two users searching the same query may see entirely different citations because their personalization profiles trigger different retrieval paths.

The Resolution: Monitor Continuously

The 40 to 60 percent monthly drift rate means weekly monitoring is the minimum viable cadence. Monthly check-ins are like taking your blood pressure once a year and wondering why you missed the heart attack.

I cannot stress this enough: if you are checking AI citations monthly, you are not tracking citations. You are collecting anecdotes.

Q4. How Does Pairwise Competition Affect Your Citations? [toc=Pairwise Competition]

Google's pairwise ranking patent (US20250124067A1) reveals that your content is not scored in isolation. It is compared head-to-head against competing passages via LLM reasoning prompts, and the model picks the winner [7]. When a competitor publishes stronger content for a query you own, your citations can disappear without any change to your own pages.

How the Tournament Works

Most SEOs still think about content scoring as an independent evaluation. Write good content, meet quality thresholds, earn your position. That model is outdated.

Google's patent describes a fundamentally different approach. The LLM receives a prompt containing the query and two candidate passages. It performs step-by-step reasoning to determine which passage better answers the query, then outputs a preference. This happens across multiple passage pairs in a tournament-style bracket [7].
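A minimal sketch of a single-elimination bracket, assuming a hypothetical `judge(query, a, b)` function that stands in for the LLM reasoning prompt and returns whichever passage it prefers. Here the judge is a crude word-overlap heuristic so the example runs offline; the patent [7] describes LLM reasoning, not this heuristic, and the bracket shape is an assumption.

```python
import re
from typing import Callable

Judge = Callable[[str, str, str], str]


def overlap_judge(query: str, a: str, b: str) -> str:
    """Hypothetical stand-in for an LLM judge: prefer the passage sharing
    more words with the query (ties go to `a`)."""
    q_words = set(re.findall(r"\w+", query.lower()))

    def score(passage: str) -> int:
        return len(q_words & set(re.findall(r"\w+", passage.lower())))

    return a if score(a) >= score(b) else b


def tournament(query: str, passages: list[str], judge: Judge) -> str:
    """Run head-to-head rounds until one passage remains."""
    if not passages:
        raise ValueError("need at least one passage")
    field = list(passages)
    while len(field) > 1:
        next_round = [judge(query, field[i], field[i + 1])
                      for i in range(0, len(field) - 1, 2)]
        if len(field) % 2:  # the odd passage out gets a bye
            next_round.append(field[-1])
        field = next_round
    return field[0]


query = "how to reduce saas churn"
passages = [
    "Tips for reducing SaaS churn with onboarding.",
    "Our company history.",
    "How to reduce churn in SaaS: five steps.",
    "Pricing page.",
]
print(tournament(query, passages, overlap_judge))
```

Swapping a single competitor passage into the bracket can change the winner without any change to yours, which is the displacement dynamic described here.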

You Are in a Fight You Did Not Pick

I think of this like a boxing match you did not know you were in. Your content is in the ring against your competitor's content, and the AI is the judge. The problem is, you do not even know the fight is happening until your citation disappears.

This has practical consequences for citation tracking:

- Monitor competitor content changes: When a competitor publishes or updates content for queries where you hold citations, your risk of displacement spikes immediately.

- Track displacement timing: In our experience, citation displacement from a new competitor publication can happen within 48 hours of the competing content being indexed.

- Watch for pattern displacement: If you lose citations across multiple queries simultaneously, it often indicates a competitor published a comprehensive content piece that competes across your entire topic cluster.

Competitive Displacement Tracking

Building a competitive displacement monitoring system requires three components:

Component 1: Competitor Content Monitoring

Track when key competitors publish or significantly update content in your topic area. RSS feeds, content change detection tools, and manual checks all contribute. This connects directly to the broader practice of GEO competitive analysis.

Component 2: Citation Overlap Mapping

For each tracked query, document which competitors are cited alongside you. When the citation set changes, identify which competitor entered and which exited.

Component 3: Response Diffing

Compare AI-generated responses for the same query over time. When the answer text changes significantly, the citation set usually changes with it.
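Components 2 and 3 can be sketched with the standard library alone. This assumes you already store, per query, the set of cited domains and the answer text from two snapshots. `difflib.SequenceMatcher.ratio()` gives a rough character-level similarity and is just one simple choice of diff metric; the 0.6 cutoff is an arbitrary assumption.

```python
import difflib
from dataclasses import dataclass


@dataclass
class Snapshot:
    cited_domains: set[str]
    answer_text: str


def compare(old: Snapshot, new: Snapshot, change_cutoff: float = 0.6) -> dict:
    """Report which domains entered or exited and how much the answer changed."""
    similarity = difflib.SequenceMatcher(None, old.answer_text, new.answer_text).ratio()
    return {
        "entered": sorted(new.cited_domains - old.cited_domains),
        "exited": sorted(old.cited_domains - new.cited_domains),
        "answer_similarity": round(similarity, 2),
        "answer_changed": similarity < change_cutoff,
    }


# Hypothetical snapshots of one query, one week apart.
week1 = Snapshot({"yoursite.example", "rival-a.example"},
                 "Onboarding should start with a checklist.")
week2 = Snapshot({"rival-a.example", "rival-b.example"},
                 "Start onboarding with a guided product tour.")
print(compare(week1, week2))
```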

One thing I have noticed is that displacement is not always about the new content being objectively "better." Sometimes a competitor's passage is simply more tightly aligned with the specific claim the AI generated. Pairwise ranking rewards precision of match, not overall quality of the page [7].

Q5. What Is Citation Suppression and When Do AI Models Skip Sources? [toc=Citation Suppression]

Citation suppression occurs when AI models provide answers without referencing any sources. This happens when the model's internal confidence exceeds a threshold that makes external verification unnecessary, or when no retrieved passage meets the minimum semantic alignment score required for citation insertion. Understanding suppression is critical because it represents a blind spot in all citation tracking: you cannot track what the AI chose not to cite.

The Dark Matter of AI Visibility

Citation suppression is the phenomenon I think about most and can measure least. When ChatGPT answers a question and lists four sources, you can track whether you are one of those four. But when it answers the same question with zero sources, there is nothing to track. The absence is invisible.

Why Models Suppress Citations

There are three documented mechanisms driving citation suppression:

Mechanism 1: Parametric Confidence Override

When the LLM has high confidence in an answer based purely on its training data (parametric knowledge), it may skip the retrieval-verification loop entirely. The answer comes from what the model "knows" rather than what it can retrieve and cite. Research on semantic entropy shows that a model's uncertainty about an answer can be estimated from the consistency of its own outputs, and high-confidence responses are less likely to trigger citation verification [10].

Mechanism 2: Alignment Failure

Sometimes the retrieval system finds candidate passages, but none of them meet the semantic alignment threshold required by the grounding quality metric [4]. The model has an answer, but no passage supports it well enough to cite. Rather than cite a weak match, the system suppresses the citation entirely.

Mechanism 3: Query Type Exclusion

Certain query types are more likely to trigger suppression. Conversational queries ("tell me about X"), opinion-seeking queries ("what's the best X"), and highly general queries tend to produce uncited responses more often than specific factual queries. The DADM framework for hallucination detection suggests that the distance between the model's output and the nearest supporting document plays a role in this decision [11].

What This Means for Tracking

From a measurement perspective, citation suppression creates a category of queries where traditional citation tracking shows nothing, but AI influence may still be occurring. The AI may be drawing on your content through its training data without citing it at runtime.

This is the part that keeps me up at night. Your content could be shaping AI answers across thousands of queries without a single trackable citation. Princeton's ALCE benchmark is consistent with that possibility: even the best models properly support their claims with citations only about 50 percent of the time [2]. The other 50 percent? Some of it is hallucination. Some of it is likely uncited but legitimate use of source material.

Reducing Your Suppression Risk

While you cannot control the model's internal confidence thresholds, you can make your content harder to suppress:

- Include novel data points: Content with original statistics, survey data, or experimental results is more likely to trigger the citation pipeline because the AI cannot generate these claims from parametric knowledge alone.

- Use precise, verifiable claims: Statements like "Customer acquisition costs increased 23% among Series B SaaS companies in Q3 2025" are verifiable and citation-worthy. Vague statements like "costs are rising" are not.

- Structure content for claim matching: Use clear topic sentences that mirror the types of factual claims AI models generate. This increases the semantic alignment score during verification.

- Target factual query types: Prioritize content optimized for "how much," "what is the difference between," and "how does X work" queries, which trigger citation pipelines more reliably than conversational or opinion queries.
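A rough self-audit along these lines: split a draft into sentences and flag those containing a concrete, checkable anchor such as a number, percentage, year, or quarter. This is a crude review heuristic, not how any AI platform identifies claims, and the patterns are assumptions you would tune for your own content.

```python
import re

# Hypothetical markers of a specific, checkable claim.
SPECIFIC_PATTERNS = [
    r"\d+(\.\d+)?\s?%",           # percentages: 23%, 4.5 %
    r"\bQ[1-4]\b",                # fiscal quarters
    r"\b(19|20)\d{2}\b",          # years
    r"\$\s?\d",                   # dollar amounts
    r"\b\d+(,\d{3})*(\.\d+)?\b",  # any other number
]


def split_sentences(text: str) -> list[str]:
    """Naive sentence splitter on ., !, or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def flag_claims(text: str) -> list[tuple[str, bool]]:
    """Pair each sentence with True if it contains a specific, checkable anchor."""
    return [
        (s, any(re.search(p, s) for p in SPECIFIC_PATTERNS))
        for s in split_sentences(text)
    ]


draft = (
    "Costs are rising. "
    "Customer acquisition costs increased 23% among Series B SaaS companies in Q3 2025."
)
for sentence, specific in flag_claims(draft):
    print("SPECIFIC" if specific else "vague   ", "|", sentence)
```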

A controlled test: publish highly specific, novel data points that would only come from your content, then monitor whether AI answers reflect those data points even when citations are suppressed. If the AI "knows" your data without citing you, you have evidence of training-data influence without runtime attribution.
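A minimal sketch of the detection half of that test, assuming you log each AI answer's text and whether your domain was cited. The canary values are hypothetical placeholders for your own novel numbers. A match in an uncited answer is suggestive, not proof, since a model could arrive at the same figure independently.

```python
from dataclasses import dataclass


@dataclass
class LoggedAnswer:
    query: str
    text: str
    cites_you: bool


# Hypothetical data points that, by design, exist only in your content.
CANARIES = ["37.4%", "1,912 respondents"]


def uncited_canary_hits(answers: list[LoggedAnswer]) -> list[tuple[str, str]]:
    """Return (query, canary) pairs where a canary appears without a citation."""
    hits = []
    for ans in answers:
        if ans.cites_you:
            continue  # cited answers are already visible to normal tracking
        for canary in CANARIES:
            if canary.lower() in ans.text.lower():
                hits.append((ans.query, canary))
    return hits


log = [
    LoggedAnswer("saas churn benchmarks",
                 "Roughly 37.4% of firms reported rising churn.", cites_you=False),
    LoggedAnswer("saas churn survey",
                 "A survey of 1,912 respondents found churn rising.", cites_you=True),
]
print(uncited_canary_hits(log))
```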

Q6. How Do You Measure Citation Stability? The 7/14/30-Day Framework [toc=Stability Measurement]

Citation stability is measured by tracking what percentage of citations persist across 7-day, 14-day, and 30-day windows using a minimum of 30 sampling runs per query per measurement period. A stability index above 60 percent indicates strong, reliable citation presence. A stability index between 30 and 60 percent indicates moderate presence with some volatility. Below 30 percent signals volatile, opportunistic citations that cannot be relied upon for consistent visibility [1].

The Three Measurement Windows

I think of citation stability measurement like monitoring vital signs. Each time window tells you something different about the health of your AI visibility.

The 7-Day Window: Acute Displacement Detection

The 7-day stability index catches sudden citation events. If your stability drops sharply within a single week, something specific triggered it: a competitor publication, a model update, or an index refresh that introduced new candidate passages. This is your early warning system.

Calculation: Run each tracked query 30 times on Day 1 and Day 7. Count the percentage of runs where your domain appears as a citation on both days.

The 14-Day Window: Index Cycle Capture

The 14-day window captures a full index refresh cycle for most platforms. Search engine indexes do not update all pages simultaneously. Content enters and exits the candidate pool over roughly a two-week cycle. A 14-day stability index captures whether your citation survives a complete refresh.

The 30-Day Window: Structural Stability

The 30-day window reveals whether your citation is structurally embedded or merely a temporary presence. Given that overall monthly drift ranges from 40 to 60 percent across platforms [1], any citation with 30-day stability above 60 percent is performing well above the baseline.

Calculating the Stability Index

The stability index formula is straightforward:

Stability Index = (Runs where citation persists at end of period / Total runs at start of period) x 100

For a more granular view, calculate at the query level and then aggregate:

For each query q in your tracked set:

- Execute N = 30 runs at the start of the measurement window

- Execute N = 30 runs at the end of the measurement window

- Count the number of runs where your domain is cited in both the start and end samples

- Stability(q) = Overlap runs / Total start runs x 100

Aggregate Stability Index = Average of Stability(q) across all tracked queries
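The calculation above, as a minimal sketch. It assumes each measurement window produces a list of N booleans per query (True if your domain was cited in that run) and that start run i is paired with end run i; the text does not specify how runs are paired, so that pairing is an assumption. The tier labels follow the 60 and 30 percent thresholds given at the start of this section, and the query names are hypothetical.

```python
def stability(start_runs: list[bool], end_runs: list[bool]) -> float:
    """Stability(q) = overlap runs / total start runs x 100.

    Assumes run i of the start sample is paired with run i of the end sample.
    """
    if not start_runs or len(start_runs) != len(end_runs):
        raise ValueError("need equal, non-empty start and end samples")
    overlap = sum(1 for s, e in zip(start_runs, end_runs) if s and e)
    return overlap / len(start_runs) * 100


def aggregate_stability(samples: dict[str, tuple[list[bool], list[bool]]]) -> float:
    """Average Stability(q) across all tracked queries."""
    scores = [stability(start, end) for start, end in samples.values()]
    return sum(scores) / len(scores)


def tier(index: float) -> str:
    """Map a stability index to the strong / moderate / volatile bands."""
    if index > 60:
        return "strong"
    if index >= 30:
        return "moderate"
    return "volatile"


samples = {
    "b2b saas onboarding": ([True] * 24 + [False] * 6, [True] * 21 + [False] * 9),
    "saas churn benchmarks": ([True] * 10 + [False] * 20, [False] * 30),
}
agg = aggregate_stability(samples)
print(f"aggregate stability: {agg:.1f} ({tier(agg)})")
```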

Interpreting Stability Data

Repeatability as a Leading Indicator

Beyond stability over time, repeatability within a single measurement session matters. If you run a query 30 times on the same day and your citation appears in only 8 of those runs, you have a repeatability score of roughly 27 percent. This is a weak citation that could vanish at any time.

Repeatability above 70 percent in a single session strongly predicts 7-day stability above 50 percent. We have observed this pattern across multiple client accounts at MaximusLabs. [INSERT MAXIMUS DATA]

Q7. How Does Passage-Level Tracking Work in Practice? [toc=Passage-Level Tracking]

Passage-level tracking identifies exactly which content segments LLMs reference by computing semantic alignment between AI-generated answer text and your content passages. Instead of knowing only that your domain was cited, passage-level tracking reveals which specific paragraph is doing the work, using scroll-to-text fragment analysis and cosine similarity scoring.

Beyond Domain-Level Tracking

Most companies track citations at the domain level. "We were cited 12 times this week across ChatGPT and Perplexity." That is better than nothing, but it misses the intelligence that actually drives optimization decisions.

In my experience, when you dig into passage-level data, you almost always discover that a small number of passages are doing the majority of the citation work. I once analyzed a client's citation data and found that a single 150-word paragraph on their pricing methodology page drove 70 percent of their total citations across all queries. The rest of their 50-page content library contributed almost nothing.

That insight changes everything about where you invest optimization effort.

Method 1: Scroll-to-Text Fragment Analysis

When AI platforms cite a source, they often append scroll-to-text fragments to the citation URL. These take the format: #:~:text=specific%20quoted%20text%20here

By parsing these fragments from citation URLs, you can identify the exact text on your page that the AI is referencing. This is the most direct form of passage-level tracking available [5].

Implementation steps:

- Capture all citation URLs from AI responses for your domain

- Parse URLs for the #:~:text= fragment parameter

- Decode the URL-encoded text to identify the cited passage

- Map each citation back to the specific section or paragraph on your page

- Track which passages get cited most frequently
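The parsing steps above can be sketched with Python's standard `urllib.parse`. The sketch follows the text-fragment syntax (`#:~:text=[prefix-,]start[,end][,-suffix]`), returns the start text and optional end text, and drops the context terms; the example URLs are hypothetical, and individual platforms may format fragments differently.

```python
from urllib.parse import unquote, urlsplit


def cited_passages(url: str) -> list[str]:
    """Extract decoded text-fragment passages from a citation URL."""
    fragment = urlsplit(url).fragment
    _, sep, directives = fragment.partition(":~:")
    if not sep:
        return []  # no fragment directive present
    passages = []
    for directive in directives.split("&"):
        if not directive.startswith("text="):
            continue
        # Commas are delimiters; literal commas in the text are percent-encoded.
        parts = directive[len("text="):].split(",")
        if parts and parts[0].endswith("-"):
            parts = parts[1:]   # drop the "prefix-" context term
        if parts and parts[-1].startswith("-"):
            parts = parts[:-1]  # drop the "-suffix" context term
        if parts:
            passages.append(" ... ".join(unquote(p) for p in parts))
    return passages


url = "https://yoursite.example/pricing#:~:text=We%20price%20by%20seat,annual%20plans"
print(cited_passages(url))
```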

Method 2: Semantic Alignment Scoring

When scroll-to-text fragments are not available (not all platforms use them consistently), semantic alignment scoring provides an alternative.

The process:

- Break your content pages into atomic passages (individual paragraphs or sections)

- Embed each passage using a text embedding model (such as text-embedding-3-large)

- Embed the relevant segments of the AI-generated answer

- Compute cosine similarity between each of your passages and the answer segments

- The passage with the highest cosine similarity above a threshold (typically 0.75 or higher) is the likely cited passage

Research suggests that passages with cosine similarity above 0.85 to AI answer segments have significantly higher citation probability than those below 0.70 [12].
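A minimal sketch of the matching step, assuming you have already embedded your passages and the answer segment with the same model (for example, text-embedding-3-large). The toy three-dimensional vectors below stand in for real embeddings, which have far more dimensions. The 0.75 threshold mirrors the one above, and `best_passage` is a hypothetical helper name.

```python
import math
from typing import Optional


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def best_passage(answer_vec: list[float], passages: dict[str, list[float]],
                 threshold: float = 0.75) -> Optional[tuple[str, float]]:
    """Return (passage_id, similarity) for the closest passage above threshold."""
    scored = [(pid, cosine(answer_vec, vec)) for pid, vec in passages.items()]
    if not scored:
        return None
    pid, sim = max(scored, key=lambda item: item[1])
    return (pid, sim) if sim >= threshold else None


# Toy vectors standing in for real passage embeddings.
passages = {
    "pricing-methodology": [0.9, 0.1, 0.2],
    "company-history": [0.1, 0.9, 0.1],
}
print(best_passage([0.8, 0.2, 0.3], passages))
```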

Method 3: Multi-Citation Detection

Some responses cite the same domain multiple times. Tracking multi-citation responses reveals which pages have depth that the AI engine finds valuable across multiple claims in a single answer.

If your domain is cited 3 times in a single ChatGPT response, each citation likely references a different passage. Mapping all three gives you a complete picture of which content segments the AI finds most attributable.
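A small sketch of multi-citation detection, assuming you have the list of citation URLs from one response. It groups the URLs by domain (treating "www." as the same site, an assumption) and keeps only domains cited two or more times, so each URL's text fragment can then be mapped to a passage. The URLs are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def multi_citations(citation_urls: list[str]) -> dict[str, list[str]]:
    """Map each domain cited 2+ times in one response to its citation URLs."""
    by_domain: dict[str, list[str]] = defaultdict(list)
    for url in citation_urls:
        domain = urlsplit(url).netloc.lower().removeprefix("www.")
        by_domain[domain].append(url)
    return {domain: urls for domain, urls in by_domain.items() if len(urls) >= 2}


response_urls = [
    "https://yoursite.example/guide#:~:text=Step%20one",
    "https://rival-a.example/post",
    "https://www.yoursite.example/guide#:~:text=Pricing%20tiers",
    "https://yoursite.example/faq",
]
print(multi_citations(response_urls))
```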

Method 4: Cross-Platform Consistency Mapping

Track which specific passages are cited across different platforms. If the same passage on your site gets cited by ChatGPT, Perplexity, and Google AI Overviews, that passage has strong cross-platform alignment. It is structurally sound for AI citation.

Passages that only get cited on one platform may have platform-specific alignment (matching that platform's retrieval preferences) but lack universal citation strength. This is explored in depth in our AI Share of Voice guide, where cross-platform citation patterns inform SOV calculations.
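A minimal sketch of cross-platform consistency mapping, assuming you already have, per platform, the set of your passage IDs that were cited (for example, from fragment parsing or similarity matching). The platform keys and passage IDs are hypothetical.

```python
from collections import Counter


def classify_passages(cited_by_platform: dict[str, set[str]]) -> dict[str, set[str]]:
    """Split cited passages into universal (cited on every platform) and
    platform-specific (cited on exactly one platform)."""
    all_sets = list(cited_by_platform.values())
    if not all_sets:
        return {"universal": set(), "platform_specific": set()}
    universal = set.intersection(*all_sets)
    counts = Counter(p for s in all_sets for p in s)
    specific = {p for p, c in counts.items() if c == 1}
    return {"universal": universal, "platform_specific": specific}


cited = {
    "chatgpt": {"pricing-methodology", "onboarding-steps"},
    "perplexity": {"pricing-methodology", "churn-benchmarks"},
    "google_aio": {"pricing-methodology"},
}
print(classify_passages(cited))
```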

An experiment worth running: build a passage-level citation heat map for 50 content pages across 4 platforms. Identify "universal citation passages" that appear across all platforms versus "platform-specific passages" that only appear on one.

Q8. How Do You Build a Citation Monitoring Workflow? [toc=Monitoring Workflow]

A citation monitoring workflow combines manual spot-checks with automated API-based sampling across tracked queries. For statistical validity, execute each tracked query at least 30 times per platform per measurement period, capture binary citation presence along with passage-level alignment and stability trends, and set automated alerts for displacement events and stability drops.

The Workflow I Wish I Had Started With

When we first started tracking AI citations for clients at MaximusLabs, our process was embarrassingly manual. I would open ChatGPT, type a query, check if the client was cited, and write it down in a spreadsheet. Repeat for Perplexity. Repeat for Google AI Overviews. Multiply by 50 queries.

It took about a month before I realized three things:

- The manual process was too slow to catch displacement events

- Single-run checks were statistically meaningless

- We needed a system, not a checklist

Here is the workflow I would build if I were starting from scratch today.

Phase 1: Query Set Selection

Start with 30 to 50 queries that represent your highest-value topics. These should include:

- Money queries: Queries that directly relate to your product or service category

- Authority queries: Queries where being cited positions you as a domain expert

- Competitive queries: Queries where your competitors are currently cited

Do not try to track hundreds of queries at the start. Depth of tracking on fewer queries produces better intelligence than shallow tracking across many queries.

Phase 2: Platform Coverage Matrix

Not every query needs to be tracked on every platform. Build a coverage matrix that records which platforms each tracked query will be monitored on.

Prioritize ChatGPT, Google AI Overviews, and Perplexity. These three platforms cover the majority of AI search traffic and have distinct citation behaviors worth tracking separately [13].

Phase 3: Sampling Cadence

- Weekly full runs: Execute your complete query set with N = 30 runs per query per platform. This is your primary measurement event.

- Daily spot-checks: Run your top 10 money queries once per day on each primary platform. This catches acute displacement events between weekly runs.

- Monthly deep analysis: Aggregate weekly data into stability indices, displacement reports, and passage utilization maps.

Phase 4: Data Capture Template

For each query execution, capture the following fields:

Phase 5: Escalation Triggers

Set automated alerts for:

- Displacement alert: Your citation disappears from a money query after being stable for 2+ weeks

- Competitor entry alert: A new competitor domain appears in the citation set for a tracked query

- Stability drop alert: 7-day stability index drops below 40 percent for any tracked query

- Suppression alert: A query that previously produced cited responses begins producing uncited responses
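A minimal sketch of how those four triggers could be evaluated from weekly summaries for one query. The `WeekSummary` fields are a hypothetical schema, and reading "stable for 2+ weeks" as "cited in the two previous weekly summaries" is an assumption; the 40 percent stability threshold comes from the list above.

```python
from dataclasses import dataclass, field


@dataclass
class WeekSummary:
    query: str
    cited: bool            # your domain cited in this week's sample
    stability_7d: float    # 7-day stability index, 0 to 100
    any_citations: bool    # did the responses cite any sources at all?
    competitor_domains: set[str] = field(default_factory=set)


def alerts(history: list[WeekSummary], money_query: bool = True) -> list[str]:
    """Compare the latest week against earlier weeks for one query."""
    if len(history) < 2:
        return []
    *past, now = history
    fired = []
    stable_weeks = past[-2:]
    if (money_query and len(stable_weeks) == 2
            and all(w.cited for w in stable_weeks) and not now.cited):
        fired.append("displacement")
    seen = set().union(*(w.competitor_domains for w in past))
    if now.competitor_domains - seen:
        fired.append("competitor_entry")
    if now.stability_7d < 40:
        fired.append("stability_drop")
    if past[-1].any_citations and not now.any_citations:
        fired.append("suppression")
    return fired


q = "b2b saas onboarding"
history = [
    WeekSummary(q, True, 72.0, True, {"rival-a.example"}),
    WeekSummary(q, True, 68.0, True, {"rival-a.example"}),
    WeekSummary(q, False, 31.0, True, {"rival-a.example", "rival-b.example"}),
]
print(alerts(history))
```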

Phase 6: Displacement Investigation Protocol

When an escalation trigger fires, here is how to investigate. I will walk through a real example pattern we have seen.

Scenario: Your Monday morning alert shows that your citation for "best practices for B2B SaaS onboarding" disappeared from ChatGPT after 3 weeks of stable presence.

Step 1: Confirm the displacement (15 minutes). Run the query 10 times on ChatGPT. If your citation appears in fewer than 2 of those runs, the displacement is confirmed. Record which domains are now cited.

Step 2: Identify the displacing content (30 minutes). Check the new citation URLs and visit them. Did a competitor recently publish or update content on this topic? Check their publication dates.

Step 3: Analyze the pairwise match (30 minutes). Compare the competitor's cited passage against your own target passage. Is theirs more recent? More specific? Does it contain data points yours lacks? Does it more directly answer the claim in the AI-generated response?

Step 4: Decide on a response (15 minutes). You have three options:

- Strengthen: Update your passage to be more directly aligned with the AI-generated claim. Add fresher data, more specific examples, clearer assertions.

- Expand: Create a new passage that targets the specific claim angle the competitor is winning on.

- Accept: If the competitor's passage is genuinely better for this specific query, shift your tracking focus to adjacent queries where you can win.

This investigation takes about 90 minutes total. It is not something you automate. It requires strategic judgment about whether and how to respond. The automated system catches the event. The human decides what to do about it.

Phase 7: Workflow Automation Tiers

For automation, you have three tiers of investment:

Tier 1: Manual + Spreadsheet (free, time-intensive). Manually query platforms and log results. Works for up to 20 queries; unsustainable beyond that.

Tier 2: API-Based Scripting (moderate cost, scalable). Use OpenAI, Perplexity, and Gemini APIs to programmatically execute queries and parse citation data. Build a simple script that runs on a cron schedule and writes to a database.

Tier 3: Platform Subscription (higher cost, turnkey). Use dedicated GEO tracking platforms that handle query execution, citation parsing, stability scoring, and alerting automatically.

The right tier depends on your query volume, budget, and technical capability. I have seen effective programs at all three levels.
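A minimal sketch of the Tier 2 sampling loop. Rather than guess at each vendor's API, it takes a hypothetical `ask(platform, query)` callable that you would implement with the relevant SDK and that returns the citation URLs from one response. The fake client exists only so the sketch runs offline; in production the rows would be written to a database rather than kept in a list.

```python
import random
from typing import Callable
from urllib.parse import urlsplit

AskFn = Callable[[str, str], list[str]]  # (platform, query) -> citation URLs


def run_batch(ask: AskFn, queries: list[str], platforms: list[str],
              your_domain: str, runs: int = 30) -> list[dict]:
    """Execute every query `runs` times per platform and record citation presence."""
    rows = []
    for platform in platforms:
        for query in queries:
            for run in range(runs):
                urls = ask(platform, query)
                domains = {urlsplit(u).netloc.lower() for u in urls}
                rows.append({
                    "platform": platform,
                    "query": query,
                    "run": run,
                    "cited": your_domain in domains,
                    "domains": sorted(domains),
                })
    return rows


_FAKE_RNG = random.Random(7)


def fake_ask(platform: str, query: str) -> list[str]:
    """Offline stand-in for a real client: cites yoursite.example about half the time."""
    urls = ["https://rival-a.example/post"]
    if _FAKE_RNG.random() < 0.5:
        urls.append("https://yoursite.example/guide")
    return urls


rows = run_batch(fake_ask, ["b2b saas onboarding"], ["chatgpt", "perplexity"],
                 "yoursite.example")
for platform in ("chatgpt", "perplexity"):
    hits = sum(r["cited"] for r in rows if r["platform"] == platform)
    print(f"{platform}: cited in {hits}/30 runs")
```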

What I'm Thinking About Next

The citation tracking discipline is still in its infancy. We are building the measurement playbook in real time, and I expect the methodologies I have described here to evolve significantly over the next 12 to 18 months.

What keeps me thinking is the gap between what we can measure and what actually matters. We can track citation presence, stability, and passage alignment. But we cannot yet measure the full influence of our content on AI-generated answers when citations are suppressed. That dark influence zone is the next frontier.

I also think we are heading toward a world where AI platforms provide native citation analytics. Microsoft Copilot already shows web search query transparency [16]. It is only a matter of time before Google and OpenAI offer publisher-facing dashboards showing how content is used in AI answers.

Until then, the forensic approach I have outlined here, combining patent knowledge with statistical sampling and passage-level tracking, is the most reliable way to understand your citation reality. If you are building a GEO measurement practice, our comprehensive GEO Measurement framework covers the full picture beyond citations.

Frequently Asked Questions

What is citation tracking in AI search? Citation tracking monitors when and why AI platforms like ChatGPT, Google AI Overviews, and Perplexity reference your content as a source in their generated answers, using repeated sampling to account for probabilistic variance.

How often do AI search citations change? Monthly citation drift ranges from 40.5% on Perplexity to 59.3% on Google AI Overviews, meaning roughly half of all citations are different each month. Weekly monitoring is the minimum viable cadence.
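To make "drift" concrete, here is a minimal sketch that computes it as the share of sources cited in one period that are no longer cited in the next. This definition is my assumption for illustration; SearchAtlas's exact methodology may differ, and the domain names are hypothetical.

```python
def citation_drift(earlier: set[str], later: set[str]) -> float:
    """Share of sources cited in the earlier period that are absent later.

    Assumption: drift is measured against the earlier period's cited set.
    """
    if not earlier:
        return 0.0
    return len(earlier - later) / len(earlier)


june = {"a.com", "b.com", "c.com", "d.com", "e.com"}
july = {"a.com", "b.com", "f.com", "g.com"}
print(f"{citation_drift(june, july):.0%}")  # 3 of 5 June sources dropped: 60%
```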

How do LLMs decide which sources to cite? LLMs follow a citation insertion pipeline: they identify verifiable claims, search for semantically matching passages, evaluate alignment against a grounding quality threshold, and insert citations when confidence is sufficient.
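The match-then-threshold step can be sketched in a few lines. This is an illustration under stated assumptions, not any platform's implementation: claims are assumed to be already extracted, bag-of-words cosine similarity stands in for embedding similarity, and the `threshold=0.5` cutoff is a hypothetical stand-in for a grounding quality threshold. When no passage clears it, the claim gets no citation, which is one of the suppression cases described in the next answer.

```python
import math
import re
from collections import Counter


def _bag_of_words(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: str, b: str) -> float:
    """Cosine similarity of term-count vectors (a stand-in for embeddings)."""
    va, vb = _bag_of_words(a), _bag_of_words(b)
    dot = sum(count * vb[term] for term, count in va.items())
    norm = math.sqrt(sum(c * c for c in va.values())) * math.sqrt(
        sum(c * c for c in vb.values())
    )
    return dot / norm if norm else 0.0


def insert_citations(claims, passages, threshold=0.5):
    """Attach the best-aligned passage URL to each claim, or None if suppressed."""
    results = []
    for claim in claims:
        best_url, best_score = None, 0.0
        for url, text in passages.items():
            score = cosine(claim, text)
            if score > best_score:
                best_url, best_score = url, score
        cited = best_url if best_score >= threshold else None
        results.append((claim, cited, round(best_score, 2)))
    return results


passages = {
    "https://a.example/drift": "Google AI Overviews citation drift reached 59.3 percent in July",
    "https://b.example/pricing": "Pricing plans for enterprise teams",
}
claims = ["Citation drift on Google AI Overviews was 59.3 percent", "Our team loves coffee"]
# The second claim has no aligned passage, so its citation is suppressed (None).
for row in insert_citations(claims, passages):
    print(row)
```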

What is the difference between content-first and generate-first citation pathways? Content-first retrieves source documents before generating the answer. Generate-first writes the answer from training knowledge first, then searches for documents to verify claims. Both pathways can produce citations for your content.

Why do some AI answers have no citations at all? Citation suppression occurs when the model has high parametric confidence, when no retrieved passage meets the alignment threshold, or when the query type (conversational, opinion-seeking) does not trigger the citation verification pipeline.

What is a good citation stability index? Above 60% stability over a 30-day window indicates strong, reliable citation presence. Between 30% and 60% indicates moderate volatility. Below 30% signals opportunistic citations that require content optimization.
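A minimal sketch of the scoring and banding, assuming the stability index is the share of sampled runs in the window where your URL was cited (my working definition for this example). The band edges follow the thresholds above; the 21-of-30 window is hypothetical.

```python
def stability_index(cited_runs: list[bool]) -> float:
    """Share of sampled runs in the window where your URL was cited."""
    if not cited_runs:
        raise ValueError("need at least one sampled run")
    return sum(cited_runs) / len(cited_runs)


def classify_stability(index: float) -> str:
    """Bands: above 60% strong, 30% to 60% moderate, below 30% opportunistic."""
    if index > 0.60:
        return "strong"
    if index >= 0.30:
        return "moderate"
    return "opportunistic"


# Hypothetical 30-day window: cited in 21 of 30 sampled runs.
runs = [True] * 21 + [False] * 9
index = stability_index(runs)
print(f"{index:.0%} -> {classify_stability(index)}")  # 70% -> strong
```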

How many times should I sample each query for reliable citation tracking? A minimum of 30 sampling runs per query per platform per measurement period is needed for statistically reliable estimates, based on the observed 40-60% monthly drift rates and the ALCE benchmark's findings on citation inconsistency.
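One way to see why small samples mislead is to put a confidence interval around an observed citation rate. The sketch below uses the Wilson score interval, a standard interval for binomial proportions that I am swapping in for illustration; it is not the method behind the 30-run guideline. With the same 50% citation rate, 5 of 10 runs gives roughly 24% to 76%, while 15 of 30 narrows it to roughly 33% to 67%.

```python
import math


def wilson_interval(cited: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a citation rate (z=1.96)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = cited / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


for cited, n in [(5, 10), (15, 30)]:
    lo, hi = wilson_interval(cited, n)
    print(f"{cited}/{n}: {lo:.0%} to {hi:.0%}")
```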

What is pairwise passage ranking in the context of AI citations? Google's patent describes LLMs comparing your passage head-to-head against a competing passage via reasoning prompts. The model picks the more relevant passage, meaning your citation can disappear when a competitor publishes better-aligned content.
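Here is a minimal sketch of head-to-head ranking, assuming an all-pairs scheme where each comparison is asked in both orders to dampen position bias and passages are ranked by total wins. That aggregation is one common choice and may not match the patent's exact procedure. In a real system the comparator is an LLM prompt; `stub_prefers_first` is a hypothetical stand-in that scores query-term overlap so the example runs offline.

```python
from itertools import combinations


def stub_prefers_first(query: str, first: str, second: str) -> bool:
    """Hypothetical stand-in for an LLM prompt such as:
    'Given the query, which passage is more relevant: A or B?'
    Here: more query-term overlap wins; ties go to the first passage.
    """
    terms = set(query.lower().split())
    overlap_first = len(terms & set(first.lower().split()))
    overlap_second = len(terms & set(second.lower().split()))
    return overlap_first >= overlap_second


def pairwise_rank(query, passages, prefers_first=stub_prefers_first):
    """Rank passage IDs by wins across all head-to-head comparisons."""
    wins = {pid: 0 for pid in passages}
    for a, b in combinations(passages, 2):
        # Compare in both orders to dampen position bias.
        for first, second in ((a, b), (b, a)):
            if prefers_first(query, passages[first], passages[second]):
                wins[first] += 1
            else:
                wins[second] += 1
    return sorted(wins, key=wins.get, reverse=True)


passages = {
    "yours": "a guide to seo keywords",
    "competitor": "how to track ai citations across platforms",
}
print(pairwise_rank("how to track ai citations", passages))  # ['competitor', 'yours']
```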

How do I detect when a competitor displaces my citation? Monitor competing citation URLs alongside your own for each tracked query. When your citation disappears and a new competitor URL appears in the same response, displacement has occurred. Track timing and frequency to identify patterns.
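The displacement rule above translates directly into a comparison of consecutive sampled runs. A minimal sketch, assuming each run is reduced to a date plus the set of cited URLs; `SampledRun` and all URLs are hypothetical. A drop with no newcomer is not flagged, since that looks like suppression rather than displacement.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class SampledRun:
    day: date
    cited: set[str]


def displacement_events(my_url: str, runs: list[SampledRun]):
    """Flag runs where my_url dropped out AND a new URL appeared in its place."""
    events = []
    ordered = sorted(runs, key=lambda r: r.day)
    for prev, curr in zip(ordered, ordered[1:]):
        if my_url in prev.cited and my_url not in curr.cited:
            newcomers = curr.cited - prev.cited
            if newcomers:
                events.append((curr.day, sorted(newcomers)))
    return events


MINE = "https://client.example/guide"
history = [
    SampledRun(date(2025, 6, 30), {MINE, "https://a.example/"}),
    SampledRun(date(2025, 7, 1), {"https://a.example/"}),  # dropped, no replacement
    SampledRun(date(2025, 7, 2), {MINE, "https://a.example/"}),
    SampledRun(date(2025, 7, 4), {"https://a.example/", "https://rival.example/"}),
]
print(displacement_events(MINE, history))
```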

Can I track which specific paragraph on my page gets cited by AI? Yes. Parse scroll-to-text fragments from citation URLs to identify exact quoted text, or use semantic alignment scoring (cosine similarity) between your content passages and AI answer segments to identify the cited passage.
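When a citation URL carries a scroll-to-text fragment, the quoted text can be read straight from it. This sketch follows the fragment syntax in the Text Fragments specification [5] (`#:~:text=[prefix-,]textStart[,textEnd][,-suffix]`, with multiple directives joined by `&`): it drops the optional prefix and suffix context terms and percent-decodes the rest. Joining a start/end range with "..." is my own display choice. When no fragment is present, fall back to semantic alignment scoring.

```python
from urllib.parse import unquote, urlsplit


def cited_text_from_url(url: str) -> list[str]:
    """Extract the quoted text from scroll-to-text fragments (#:~:text=...)."""
    fragment = urlsplit(url).fragment
    if ":~:" not in fragment:
        return []
    directives = fragment.split(":~:", 1)[1]
    quotes = []
    for directive in directives.split("&"):
        if not directive.startswith("text="):
            continue
        parts = directive[len("text="):].split(",")
        # Drop optional context terms: a prefix ends with "-", a suffix starts with "-".
        if parts and parts[0].endswith("-"):
            parts = parts[1:]
        if parts and parts[-1].startswith("-"):
            parts = parts[:-1]
        # A start/end pair marks a range; show it as "start ... end".
        quotes.append(" ... ".join(unquote(p) for p in parts))
    return quotes


print(cited_text_from_url(
    "https://example.com/post#:~:text=citation%20drift%20is%20volatile"
))
```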

References

[1] SearchAtlas, "Why Do AI Search Results Keep Changing?" Measured between June and July 2025: Google AI Overviews 59.3% citation drift, ChatGPT 54.1%, Perplexity 40.5%. https://searchatlas.com/blog/why-do-ai-search-results-keep-changing/

[2] Gao, T., Yen, H., Yu, J., Chen, D., "Enabling Large Language Models to Generate Text with Citations," EMNLP 2023. Introduces the ALCE benchmark and shows that even the best models lack complete citation support about 50% of the time. https://aclanthology.org/2023.emnlp-main.398/

[3] Google LLC, US Patent US11886828B1, "Generative Summaries for Search Results." Describes content-first and generate-first citation pathways with verification loop. https://patents.google.com/patent/US11886828B1/

[4] Google LLC, US Patent US20250156456A1, "Large Language Model Adaptation for Grounding." Introduces grounding quality metric for quantifying attribution quality. https://patents.google.com/patent/US20250156456A1/

[5] WICG (W3C Web Incubator Community Group), Text Fragments specification. Scroll-to-text fragment format: #:~:text= for linking to specific text passages. https://wicg.github.io/scroll-to-text-fragment/

[6] Kuhn, L., Gal, Y., Farquhar, S., "Semantic Uncertainty: Linguistic Invariances for Uncertainty Estimation in Natural Language Generation," ICLR 2023. Demonstrates how model uncertainty drives output variability. https://arxiv.org/abs/2302.09664

[7] Google LLC, US Patent US20250124067A1, "Method for Text Ranking with Pairwise Ranking Prompting." Describes head-to-head passage comparison via LLM reasoning. https://patents.google.com/patent/US20250124067A1/

[8] Google LLC, US Patent US12437016B2, "Fine-tuning Large Language Models using Reinforcement Learning with Search Engine Feedback." Models trained to prefer verifiable, search-grounded responses. https://patents.google.com/patent/US12437016B2/

[9] Google LLC, US Patent US20240289407A1, "Search with Stateful Chat." AI Mode pipeline with personalization embeddings conditioning downstream processing. https://patents.google.com/patent/US20240289407A1/

[10] Kuhn, L., Gal, Y., Farquhar, S., "Semantic Uncertainty," ICLR 2023. Semantic entropy measures uncertainty across sampled responses. https://arxiv.org/abs/2302.09664

[11] DADM: "Hallucination Detection in LLMs via Distance-Aware Distribution Modeling," ICLR 2026 submission. Uses normalizing flows to distinguish truthful from hallucinated outputs. [URL NEEDED]

[12] Embedding similarity and citation probability research. Passages with cosine similarity above 0.85 have significantly higher citation probability. [URL NEEDED]

[13] Qwairy, "Perplexity vs ChatGPT: AI Citation Study Q3 2025." 118K answers analyzed. Perplexity: 21.87 citations per question avg. ChatGPT: 7.92 citations per question avg. [URL NEEDED - verify at qwairy.com]

[14] Aggarwal et al., "GEO: Generative Engine Optimization," KDD 2024. Citing sources in content yields 30-40% visibility improvement. https://dl.acm.org/doi/10.1145/3637528.3671900

[15] The Digital Bloom, "2025 AI Visibility Report: How LLMs Choose What Sources to Mention." Brand search volume strongest predictor (0.334 correlation) of LLM citation inclusion. [URL NEEDED - verify at thedigitalbloom.com]

[16] Microsoft, "Introducing Copilot Search in Bing," April 2025. Inline sentence-level citations with web search query transparency. [URL NEEDED - verify at blogs.bing.com]
