Q1. What Is GEO Measurement and Why Does It Matter in 2026? [toc=GEO Measurement Defined]

The search landscape in 2026 looks nothing like it did even two years ago. AI bots now account for over 51% of global internet traffic, and more than 60% of B2B searches end without a single click to a website. ChatGPT, Perplexity, Gemini, Google AI Overviews, and Grok are not just supplementing Google's traditional results. They are replacing them. In this environment, GEO measurement is the discipline of tracking whether your brand is cited as a source of truth by large language models when prospects ask questions relevant to your market.

Why Traditional SEO Metrics Fall Short

The measurement toolkit that served digital marketers for the past decade was built for a world of 10 blue links. Click-through rates, keyword rankings, page-one positions, and organic traffic sessions all assume a fundamental behavior: the user clicks a link. But when an AI engine synthesizes an answer directly in the response window, there is no click to measure. Up to 67% of AI-driven traffic goes completely untracked by conventional analytics platforms. Google Analytics 4, Search Console, and legacy rank trackers were never designed to capture citation-based visibility, and retrofitting them is not a viable solution.

The Probabilistic Nature of AI Citations

Generative engine optimization measurement demands a fundamentally different approach because citations in AI search are probabilistic, not deterministic. Unlike traditional search rankings that remain relatively stable across sessions, AI-generated responses vary based on context, conversation history, and internal model confidence scores. Citation drift ranges from 40.5% on Perplexity to 59.3% on Google AI Overviews each month. For any single response the outcome is binary: your brand is either cited as a trusted source, or it is invisible. Across many responses, visibility becomes a rate that has to be estimated from samples.

This is the Red Ocean versus Blue Ocean distinction that defines GEO measurement strategy. Traditional SEO measurement is a Red Ocean: every brand competes to measure the same 10 positions on the same results page. GEO measurement is Blue Ocean territory. Being the first cited source in an AI-generated answer creates a compounding advantage that competitors cannot easily replicate, and measuring that advantage requires statistical sampling rather than deterministic rank checking.

How MaximusLabs Approaches GEO Measurement

MaximusLabs AI pioneered GEO measurement methodology by building proprietary citation intelligence systems that track brand visibility across every major AI search platform simultaneously. Our measurement framework connects citation presence to revenue pipeline, not just vanity metrics. Through a trust-first SEO approach, we help B2B brands understand not just whether they appear in AI answers, but whether those appearances drive qualified pipeline and closed deals. This revenue-focused measurement philosophy separates true GEO intelligence from surface-level citation counting.

The urgency is undeniable. AI-referred sessions grew 527% between January and May 2025 alone. Brands that lack GEO measurement infrastructure are not just missing data; they are missing the fastest-growing source of high-intent B2B traffic in the history of digital marketing. For SaaS founders and marketing leaders, GEO measurement is no longer optional. It is existential.

Q2. How Is GEO Measurement Different from Traditional SEO Measurement? [toc=GEO vs SEO Metrics]

SEO measurement has relied on the same core instrumentation for over 15 years. Google Analytics tracks sessions and conversions. Google Search Console reports keyword impressions and average positions. Third-party rank trackers monitor daily position changes across target keywords. This toolkit works well for a search ecosystem built on clicks and links. GEO measurement requires an entirely new layer of instrumentation because the fundamental unit of measurement has changed from "position on a results page" to "citation in an AI-generated answer."

Where Traditional Measurement Breaks Down

Traditional SEO measurement makes three assumptions that no longer hold in AI search. First, it assumes positions are deterministic: a page either ranks #3 or it does not. In AI search, citations are probabilistic and context-dependent. Second, it assumes clicks happen: if a page ranks well, users click through. In zero-click AI answers, the information is consumed without any click event. Third, it assumes referrer data exists: analytics platforms identify traffic sources through HTTP referrer headers and UTM parameters. AI search engines frequently strip or omit this data, creating the dark traffic problem.

The Measurement Gap in Numbers

Consider the scale of the gap. Over 60% of B2B searches now end without a click. Up to 67% of AI-influenced visits arrive with no referrer attribution. Only 11% of domains are cited by both ChatGPT and Perplexity, meaning single-platform measurement creates massive blind spots. Traditional rank trackers report positions that may be irrelevant when an AI Overview consumes the entire above-the-fold space.

"We're essentially flying blind with our current analytics setup. Half our prospects tell us they found us through ChatGPT but GA4 shows them as direct traffic."

-- u/b2b_growth_lead, r/TechSEO

The GEO Measurement Paradigm

GEO measurement replaces each traditional metric with an AI-native equivalent. Keyword rank becomes citation frequency: how often is your brand cited across a sample of relevant queries? Page-one share becomes AI Share of Voice: what percentage of AI-generated answers in your category cite your brand versus competitors? Ranking volatility becomes the Citation Stability Index: how consistently does your brand maintain its citation presence over time? And organic traffic attribution becomes the Dark Traffic Proxy Score: what portion of your direct and branded search traffic is actually AI-influenced?

How MaximusLabs Bridges the Gap

MaximusLabs built a GEO strategy framework specifically designed to layer on top of existing analytics infrastructure. Clients do not need to abandon Google Analytics or their current reporting stack. Instead, our citation intelligence platform adds the missing AI visibility layer, correlating citation monitoring data with web analytics patterns to produce a complete picture of search performance across both traditional and AI channels. This approach reflects the Search Everywhere Optimization philosophy: measure everywhere your brand could appear, not just where traditional tools can see.

Q3. What Are the Core KPIs for GEO Measurement? [toc=Core GEO KPIs]

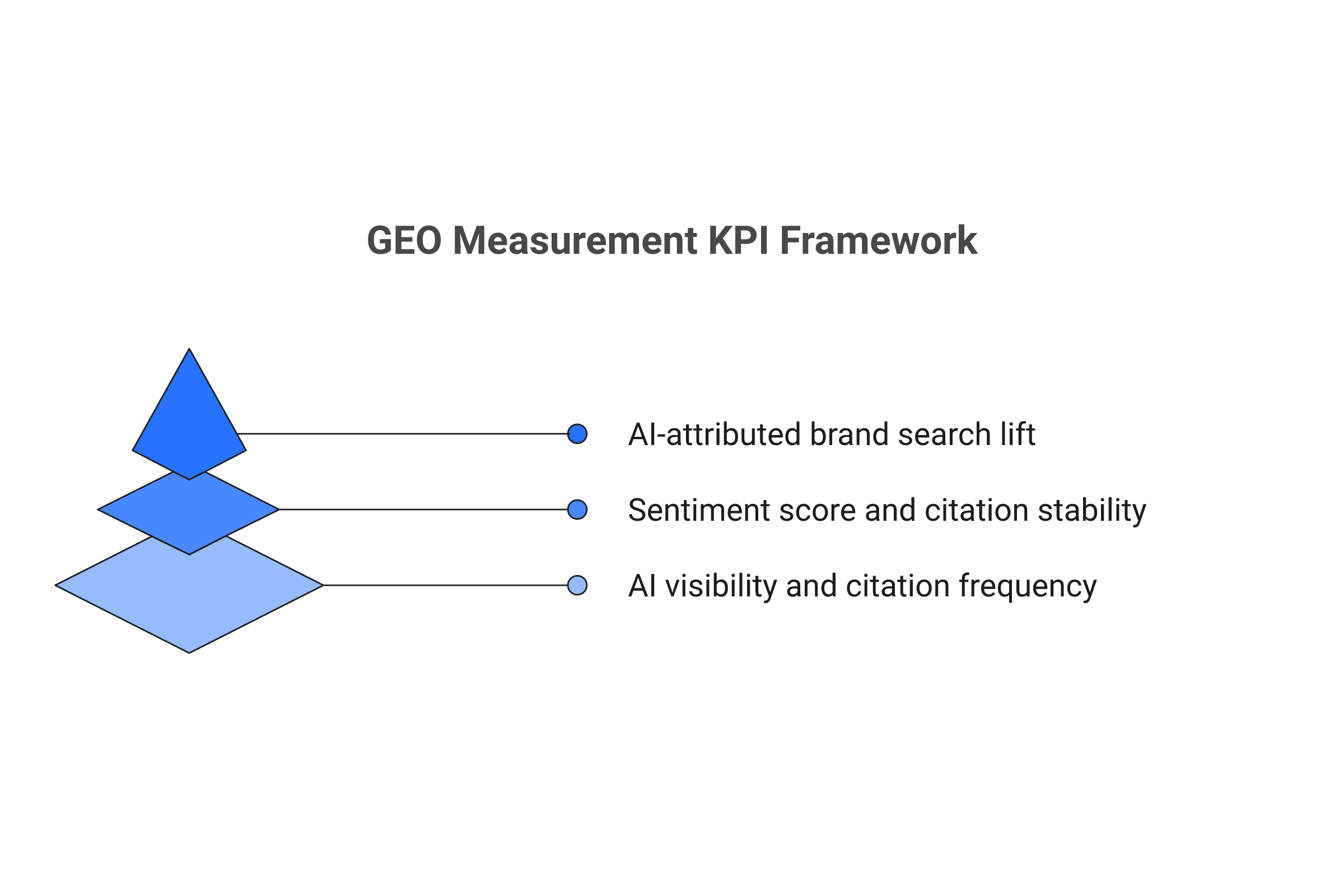

Effective GEO measurement requires a structured KPI framework organized by business impact. The following three-tier system moves from surface-level visibility metrics through quality indicators to bottom-line business outcomes. Each tier builds on the previous one, and mature GEO programs track metrics across all three simultaneously.

Tier 1: Visibility KPIs

Visibility metrics answer the foundational question: is your brand appearing in AI-generated answers?

- AI Visibility Rate: The percentage of tracked queries where your brand appears in AI-generated responses. Calculate by dividing the number of queries where your brand is cited by the total number of monitored queries. Benchmark: leading brands in established categories achieve 15-30% AI visibility rates.

- Citation Frequency: The raw count of how many times your brand is cited across AI platforms within a given time period. Track separately by platform (ChatGPT, Perplexity, Gemini, Google AI Overviews) since citation patterns vary significantly.

- AI Share of Voice: Your brand's citation count divided by total citations across all tracked competitors for the same query set. This is the GEO equivalent of traditional Share of Voice and the single most useful competitive benchmarking metric.

- Answer Position Score: When cited, where does your brand appear in the AI response? First citation carries significantly more weight than being mentioned as an afterthought in the fifth paragraph.
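The Tier 1 ratios above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the brand names, the query set, and the `results` structure (each query mapped to the tracked brands cited in one sampled answer) are all assumptions made for the example.

```python
from collections import Counter

def visibility_rate(results, brand):
    """Share of monitored queries whose sampled answer cites `brand`."""
    if not results:
        return 0.0
    cited = sum(1 for brands in results.values() if brand in brands)
    return cited / len(results)

def share_of_voice(results, brand):
    """Brand's citation count divided by all citations of tracked brands."""
    counts = Counter(b for brands in results.values() for b in brands)
    total = sum(counts.values())
    return counts[brand] / total if total else 0.0

# Hypothetical sample: query -> tracked brands cited in the answer
sample = {
    "best geo tools": ["AcmeCo", "RivalInc"],
    "how to measure ai citations": ["RivalInc"],
    "geo vs seo": ["AcmeCo"],
    "ai share of voice": [],
}

print(visibility_rate(sample, "AcmeCo"))  # cited in 2 of 4 queries
print(share_of_voice(sample, "AcmeCo"))   # 2 of 4 total citations
```

In practice each query would be sampled several times per period, so these ratios would be computed over all sampled responses rather than one answer per query.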

Tier 2: Quality KPIs

Quality metrics evaluate whether your citations are accurate, stable, and competitively defensible.

- Sentiment Score: Is your brand cited positively, neutrally, or negatively? AI engines can cite your brand while framing it unfavorably. Automated sentiment analysis across all citations catches reputation risks.

- Citation Stability Index: Measures how consistently your brand maintains citations over time. High drift rates (40.5% on Perplexity, 59.3% on Google AI Overviews) mean that a single snapshot is unreliable. The CSI tracks consistency across weekly samples.

- Passage Utilization Rate: What percentage of your published content is actually being pulled into AI responses? This reveals which content assets are performing as citation magnets versus content that LLMs ignore entirely.

- Competitive Citation Displacement: Tracks instances where your brand gained a citation that a competitor previously held, or vice versa. This is the GEO equivalent of ranking displacement in traditional SEO.

Tier 3: Impact KPIs

Impact metrics connect GEO visibility to business outcomes, which is ultimately what justifies investment.

- AI-Attributed Brand Search Lift: Correlation between citation frequency increases and branded search volume growth. Brand search volume has a 0.334 correlation coefficient with LLM citation inclusion, making it the strongest single predictor.

- AI-Influenced Conversion Rate: Conversion rate of visitors identified as AI-referred (through UTM, referrer data, or self-reported attribution) versus other channels. LLM-referred users convert at 11x the rate of standard organic visitors.

- Dark Traffic Proxy Score: Estimated percentage of "direct" and "branded search" traffic that is actually AI-influenced, calculated through correlation analysis and incrementality testing.

- Deal Velocity Compression: For B2B companies, the reduction in sales cycle length for deals where the buyer's journey included AI search touchpoints. Faster deals at higher win rates indicate GEO impact on pipeline.
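As one way to put a number on Deal Velocity Compression, the sketch below compares median sales-cycle lengths between two groups of closed deals. The function name, the use of the median, and the sample figures are assumptions for illustration; the split into groups would come from CRM data or self-reported attribution.

```python
from statistics import median

def deal_velocity_compression(ai_touched_days, other_days):
    """Relative reduction in median sales-cycle length for AI-touched deals.

    Returns 0.5 if AI-touched deals close in half the median time,
    and a negative value if they actually close more slowly.
    """
    baseline = median(other_days)
    if baseline <= 0:
        raise ValueError("baseline sales cycle must be positive")
    return (baseline - median(ai_touched_days)) / baseline

# Hypothetical sales-cycle lengths in days
ai_touched = [30, 45, 60]
others = [60, 90, 120]
print(deal_velocity_compression(ai_touched, others))  # 0.5
```

A difference like this is descriptive only. Buyers who report AI search exposure may differ from other buyers in ways that also affect cycle length.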

MaximusLabs AI structures client measurement programs around all three tiers, ensuring that visibility improvements are always connected to pipeline and revenue impact through our revenue-focused GEO methodology.

Q4. How Do You Track AI Search Visibility Across ChatGPT, Perplexity, and Gemini? [toc=AI Visibility Tracking]

Tracking AI search visibility requires a multi-platform monitoring approach because each AI search engine has different citation behaviors, response formats, and accessibility methods. No single tool covers all platforms comprehensively, so effective GEO measurement programs combine multiple data collection methods.

Method 1: LLM API-Based Citation Monitoring

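The most scalable approach uses official APIs from AI platforms to programmatically submit queries and analyze responses for brand citations. API responses can differ from what users see in the consumer apps, which layer their own search tools and system prompts on top of the model, so treat API samples as a proxy rather than an exact replica.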

- Define your query set: Build a list of 50-200 queries that represent your target topics, ranging from broad category questions to specific product comparisons

- Schedule regular sampling: Run each query through ChatGPT (via the OpenAI API), Perplexity (via its API), and Gemini (via the Gemini API) at minimum weekly intervals

- Parse responses for citations: Use NLP to identify brand mentions, source citations, and contextual sentiment in each response

- Log and track over time: Store results in a structured database to calculate citation frequency, visibility rate, and stability metrics
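The parse-and-log steps above might look roughly like the following sketch. It deliberately leaves out the API calls themselves and starts from response text that has already been fetched. The brand list, the table schema, and the function names are hypothetical, and simple word-boundary matching stands in for the NLP step.

```python
import re
import sqlite3

BRANDS = ["AcmeCo", "RivalInc"]  # hypothetical tracked brands

def find_mentions(text, brands=BRANDS):
    """Return tracked brands found in the text, ordered by first appearance."""
    hits = []
    for brand in brands:
        match = re.search(r"\b" + re.escape(brand) + r"\b", text, re.IGNORECASE)
        if match:
            hits.append((match.start(), brand))
    return [brand for _, brand in sorted(hits)]

def log_sample(conn, day, platform, query, text):
    """Store one row per brand mentioned in a single sampled response."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS citations "
        "(day TEXT, platform TEXT, query TEXT, brand TEXT, position INTEGER)"
    )
    rows = [
        (day, platform, query, brand, rank)
        for rank, brand in enumerate(find_mentions(text), start=1)
    ]
    conn.executemany("INSERT INTO citations VALUES (?, ?, ?, ?, ?)", rows)
    conn.commit()
    return len(rows)

conn = sqlite3.connect(":memory:")
answer = "Popular options include RivalInc and, for B2B teams, AcmeCo."
log_sample(conn, "2026-01-05", "perplexity", "best geo tools", answer)
rows = conn.execute(
    "SELECT brand, position FROM citations ORDER BY position"
).fetchall()
print(rows)
```

Storing the order of appearance alongside each mention keeps the door open for position-based metrics later, without re-parsing old responses.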

Method 2: SERP Scraping for AI Overviews

Google AI Overviews require a different approach since they appear within traditional search results. Tools such as SerpApi, SerpWow, and ScraperAPI can extract AI Overview content including cited sources.

- Configure scraping for your target keyword set

- Extract the AI Overview section separately from organic results

- Identify which domains are cited as sources within the overview

- Track source citation changes across daily or weekly scrapes
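Once the cited source URLs have been extracted from an AI Overview, tracking changes between scrapes is a set comparison. The sketch below assumes the URLs are already available as plain lists; it does not call any scraping service, and the function names and example URLs are illustrative.

```python
from urllib.parse import urlparse

def cited_domains(source_urls):
    """Normalize cited source URLs to a set of bare domains."""
    domains = set()
    for url in source_urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.add(host)
    return domains

def citation_changes(previous_urls, current_urls):
    """Compare two scrapes of the same query and report domain changes."""
    prev = cited_domains(previous_urls)
    curr = cited_domains(current_urls)
    return {
        "gained": sorted(curr - prev),
        "lost": sorted(prev - curr),
        "kept": sorted(prev & curr),
    }

# Hypothetical sources cited for one keyword on two scrape dates
last_week = ["https://www.example.com/a", "https://rival.io/post"]
this_week = ["https://example.com/b", "https://newsite.org/guide"]
changes = citation_changes(last_week, this_week)
print(changes)
```

Comparing at the domain level treats a swap between two pages on the same site as a kept citation, which is usually what a brand-level report wants.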

Method 3: AI Crawler Log Analysis

Monitor your server access logs for AI bot traffic to understand which pages AI engines are actively crawling and indexing.

Key bots to monitor:

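- GPTBot (OpenAI/ChatGPT)

- ClaudeBot (Anthropic)

- PerplexityBot (Perplexity)

- Google-Extended (Gemini/AI Overviews): a robots.txt control token rather than a distinct user agent, so the matching requests appear in logs under Google's standard crawler user agents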

Method 4: Statistical Sampling Framework

Because AI citations are probabilistic, single-query snapshots are unreliable. The ALCE benchmark framework (Princeton, EMNLP 2023) establishes foundational measurement standards including citation precision, recall, and fluency scores.

Apply statistical rigor to your tracking:

- Run each query a minimum of 5 times per sampling period to account for response variation

- Calculate confidence intervals for citation frequency rather than relying on point estimates

- Track citation precision (accuracy of citations attributed to your brand) and citation recall (completeness of citations your brand should receive)
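To illustrate the confidence-interval step, here is a small sketch using the Wilson score interval, which behaves better than the naive normal approximation when the number of runs is small. The choice of Wilson and the 95% level (z = 1.96) are assumptions for the example, not something the sampling framework above prescribes.

```python
import math

def wilson_interval(cited, runs, z=1.96):
    """Wilson score interval for the share of runs in which the brand was cited."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = cited / runs
    denom = 1 + z * z / runs
    center = (p + z * z / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return max(0.0, center - half), min(1.0, center + half)

# Brand cited in 3 of 5 runs of the same query
low, high = wilson_interval(3, 5)
print(round(low, 2), round(high, 2))

# Same observed rate, but from 30 of 50 runs: a much narrower interval
low50, high50 = wilson_interval(30, 50)
print(round(low50, 2), round(high50, 2))
```

With only five runs the interval spans most of the range between 0 and 1, which is why a 60% point estimate from a handful of runs says little on its own. More runs, or pooling runs across related queries, narrows it.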

"I built a custom scraper to track our mentions across Perplexity and ChatGPT. The variance between runs is wild. Same query, five minutes apart, completely different sources cited."

-- u/seo_data_nerd, r/bigseo

MaximusLabs AI combines all four methods into an integrated citation intelligence platform, eliminating the need for clients to build and maintain separate monitoring infrastructure for each AI search engine.

Q5. What Is Citation Drift and How Do You Measure Citation Stability? [toc=Citation Drift & Stability]

Citation drift is the single most disruptive variable in GEO measurement. Unlike traditional search rankings, which shift gradually over weeks or months, AI-generated citations can change from one query session to the next. The same question posed to ChatGPT at 9 AM might cite Source A, while the identical query at 2 PM cites Source B instead. This is not a bug in AI search engines. It is a fundamental characteristic of how large language models generate responses, and any GEO measurement program that ignores drift will produce misleading data.

Why Traditional Stability Assumptions Fail

SEO professionals spent years developing intuitions about ranking stability. A page that ranks #4 today will probably rank somewhere between #2 and #7 next week. This predictability enabled monthly reporting cycles and quarterly strategy reviews. Citation drift obliterates these assumptions entirely. Research shows that 40.5% of citations on Perplexity and 59.3% of citations in Google AI Overviews change on a monthly basis. A brand that appears to have strong AI visibility in a single measurement snapshot may have lost half its citations by the following month.

Quantifying Drift Across Platforms

The drift rate varies significantly by platform, query type, and topic competitiveness:

- Perplexity: 40.5% monthly citation drift rate. Perplexity's real-time web search integration means citations shift as new content is published and indexed.

- Google AI Overviews: 59.3% monthly drift rate. The highest drift among major platforms, likely due to integration with Google's constantly updated search index.

- ChatGPT: Moderate drift between knowledge cutoff updates, with significant shifts following each model update or training data refresh.

- Gemini: Variable drift rates depending on whether responses draw from real-time search or training data.

"We celebrated getting cited in Perplexity for our main keyword last month. This month? Completely gone. Replaced by a competitor's blog post that was published two weeks ago. It feels like building on quicksand."

-- u/startup_marketer_22, r/SEO

Measuring the Citation Stability Index

The Citation Stability Index (CSI) provides a quantitative measure of how reliably your brand maintains its citation presence over time. The calculation methodology involves:

- Establish baseline: Record citation presence across your full query set at the start of the measurement period

- Sample at regular intervals: Re-run the full query set weekly (minimum) across all tracked platforms

- Calculate persistence rate: For each query, determine what percentage of sampling periods your brand maintained its citation

- Aggregate to CSI score: Average persistence rates across all queries. A CSI of 0.75 means your brand maintains citations in 75% of samples
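The persistence and aggregation steps can be sketched as follows. The `history` structure (each query mapped to one True/False value per weekly sample) and the function names are assumptions for illustration.

```python
def persistence_rate(samples):
    """Fraction of sampling periods in which the brand was cited for one query."""
    if not samples:
        raise ValueError("at least one sample is required")
    return sum(1 for cited in samples if cited) / len(samples)

def citation_stability_index(history):
    """Average persistence rate across all queries that have samples."""
    rates = [persistence_rate(s) for s in history.values() if s]
    return sum(rates) / len(rates) if rates else 0.0

# Hypothetical four weeks of samples: True means the brand was cited that week
history = {
    "best geo tools": [True, True, False, True],        # 0.75
    "geo vs seo": [True, True, True, True],             # 1.00
    "ai share of voice": [False, True, False, False],   # 0.25
}
csi = citation_stability_index(history)
print(round(csi, 3))  # (0.75 + 1.00 + 0.25) / 3
```

An unweighted average treats every query equally. Weighting by query volume or commercial value is a reasonable variation when some queries matter far more than others.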

Why Weekly Sampling Is the Minimum

Given drift rates of 40-60% monthly, a single monthly sample cannot separate real citation changes from run-to-run noise. Weekly sampling provides roughly four data points per month, enough to detect drift patterns and distinguish between temporary citation loss and permanent displacement.

MaximusLabs Citation Monitoring

MaximusLabs addresses citation drift through continuous statistical sampling across all major AI platforms. Our monitoring system runs daily samples for high-priority queries and weekly samples for the broader query set, providing clients with real-time stability scores and automated drift alerts when citation presence drops below defined thresholds. This continuous monitoring approach transforms citation measurement from periodic snapshots into a living data stream.

The cross-platform dimension adds another layer of complexity. Only 11% of domains are cited by both ChatGPT and Perplexity simultaneously, meaning that measuring a single platform leaves most of the picture out of your data. Comprehensive GEO measurement must account for platform-specific drift patterns and cross-platform citation overlap to produce an accurate picture of total AI visibility.

Q6. How Do You Attribute Revenue to AI Search Visibility? [toc=Revenue Attribution for GEO]

The revenue attribution challenge is the single biggest barrier to GEO investment at the executive level. CMOs and CFOs understand organic traffic numbers. They understand cost-per-lead from paid campaigns. But when the marketing team says "we are now cited in 40% of AI-generated answers for our category," the immediate follow-up question is: "How does that translate to pipeline dollars?" The answer requires a fundamentally different attribution approach because up to 67% of AI-driven traffic arrives without the referrer data that traditional attribution models depend on.

The Failure of Last-Click Attribution in AI Search

Traditional marketing attribution assigns credit to the last touchpoint before conversion. Google Analytics 4 channel groupings categorize traffic by source, medium, and campaign parameters. UTM codes track specific links. But when a prospect reads about your product in a ChatGPT response, visits your website by typing your URL directly (because there was no link to click), and converts three days later, traditional attribution credits "Direct" traffic. The AI search touchpoint is invisible.

The Scale of the Dark Attribution Problem

The numbers quantify how severe this problem has become:

- 67% of AI-driven traffic goes untracked by conventional analytics

- AI-referred sessions grew 527% between January and May 2025

- Over 60% of B2B searches now end without a click

- LLM-referred users convert at 11x the rate of organic search visitors

This means the highest-converting traffic source in B2B marketing is also the least measurable using traditional tools.

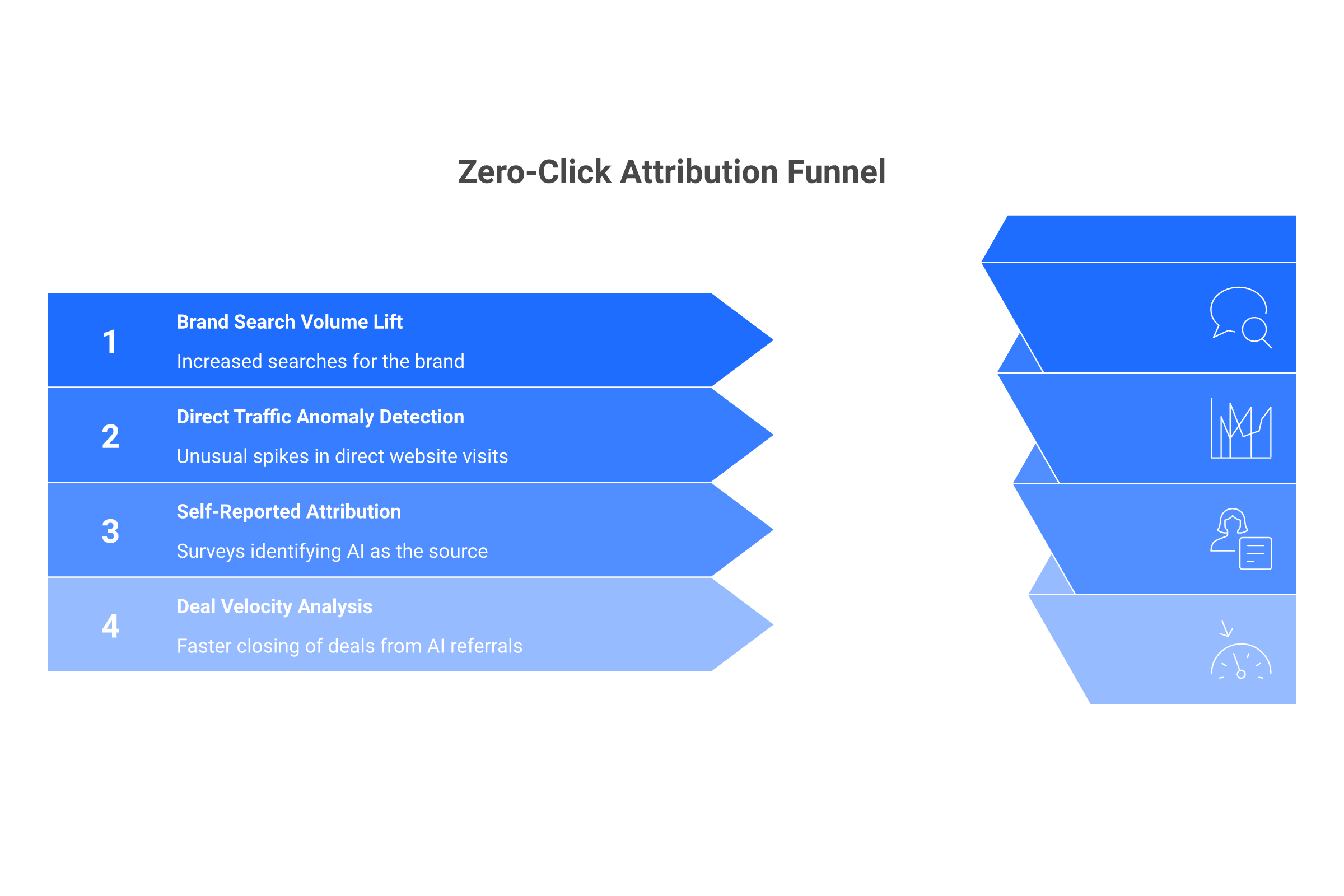

The Zero-Click Attribution Model

The Zero-Click Attribution Model provides a structured approach to connecting GEO visibility to revenue through four complementary pillars:

- Branded search lift: Brand search volume has a 0.334 correlation coefficient with LLM citation inclusion, making it the strongest single predictor of AI search influence. Track branded search volume trends in Google Search Console and correlate increases with citation frequency changes. Significant lifts following citation gains indicate AI-driven brand awareness.

- Direct traffic correlation: Analyze direct traffic patterns alongside citation monitoring data. When citation frequency increases for specific topics, does direct traffic from relevant audience segments increase proportionally? Statistical correlation analysis reveals the relationship.

- Self-reported attribution: Add "How did you hear about us?" fields to lead forms and demo request pages. Include "AI search engine (ChatGPT, Perplexity, etc.)" as an explicit option. Self-reported data is imperfect but provides a valuable triangulation point.

- Deal velocity comparison: For B2B sales teams, compare deal velocity (time from first touch to close) for deals where buyers self-report AI search exposure versus those who do not. Faster deal velocity indicates that AI citations accelerated the buyer's research process.
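The correlation checks described in this model can be sketched with a plain Pearson coefficient over weekly series. The optional lag is an assumption added for illustration, on the reasoning that branded searches may follow citation gains by a week or more. The numbers are made up.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / math.sqrt(var_x * var_y)

def lagged_correlation(citations, searches, lag_weeks=0):
    """Correlate citations in week t with branded searches in week t + lag."""
    if lag_weeks:
        citations, searches = citations[:-lag_weeks], searches[lag_weeks:]
    return pearson(citations, searches)

# Hypothetical weekly series: citation counts and branded search volume
weekly_citations = [12, 15, 14, 20, 26, 25]
weekly_searches = [400, 410, 450, 440, 520, 600]
same_week = lagged_correlation(weekly_citations, weekly_searches)
one_week_later = lagged_correlation(weekly_citations, weekly_searches, lag_weeks=1)
print(round(same_week, 2), round(one_week_later, 2))
```

A handful of weekly points gives a very noisy estimate, and correlation alone does not rule out a shared cause such as a product launch, which is why the proxy models below add controls.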

Proxy Attribution Models

Beyond the four-pillar framework, three proxy models provide additional revenue attribution rigor:

- Brand Search Lift Regression: Statistical model isolating the effect of citation changes on branded search, controlling for other marketing activities

- Incrementality Testing: Run geographic holdout experiments, in which GEO efforts are paused in specific markets, to measure the incremental impact

- Marketing Mix Modeling (MMM): Include GEO citation metrics as an input variable in broader MMM analysis to quantify contribution alongside paid, organic, and other channels

MaximusLabs revenue-focused GEO measurement builds attribution infrastructure from day one of every client engagement, connecting citation intelligence to CRM pipeline data so that every citation gain can be traced to its revenue impact. This is what separates strategic GEO measurement from vanity citation counting.

Q7. What Tools and Platforms Exist for GEO Measurement in 2026? [toc=GEO Measurement Tools]

The GEO measurement tool landscape in 2026 is fragmented but rapidly maturing. No single platform provides complete coverage across all AI search engines, measurement dimensions, and attribution needs. Successful measurement programs assemble a stack from four tool categories, each addressing a different layer of the measurement challenge.

Category 1: LLM APIs for Programmatic Citation Tracking

Direct API access to AI platforms enables the most scalable and customizable citation monitoring.

- OpenAI API (ChatGPT): GPT-4o and subsequent models via the Chat Completions endpoint. Allows programmatic query submission and response parsing. Rate limits and costs require query set prioritization.

- Perplexity API: Provides access to Perplexity's search-augmented generation with source citations included in response metadata. One of the most citation-transparent platforms.

- Google Gemini API: Access to Gemini models for query simulation. AI Overview citations require separate SERP scraping since the API does not replicate the search integration layer.

- Anthropic API (Claude): Claude model access for citation monitoring in contexts where Claude is used as a search or research assistant.

Category 2: SERP Scraping for AI Overviews

Google AI Overviews appear within traditional search results, requiring specialized extraction tools.

- SerpApi: Structured data extraction from Google SERPs including AI Overview content and cited sources. Supports batch queries and scheduled scraping.

- SerpWow: Similar SERP data extraction with AI Overview parsing capabilities and competitive monitoring features.

- ScraperAPI: Web scraping infrastructure that handles proxy rotation and CAPTCHA solving for large-scale SERP monitoring.

Category 3: Dedicated GEO Monitoring Platforms

Purpose-built platforms specifically designed for AI search visibility monitoring are the fastest-growing category in the GEO tools landscape.

- Otterly.AI: Tracks brand mentions and citations across ChatGPT, Perplexity, and Google AI Overviews. Provides visibility scores, competitor tracking, and trend analysis.

- Peec AI: Focuses on citation monitoring and competitive intelligence across AI search platforms. Includes sentiment analysis and citation context evaluation.

- Prominara: GEO-specific analytics platform with citation tracking, visibility scoring, and reporting dashboards designed for marketing teams.

- Brandlight.ai: Brand monitoring across AI-generated content with focus on reputation management and citation accuracy verification.

- SearchPilot: A/B testing and experimentation platform that includes AI search impact measurement alongside traditional SEO testing.

"We tried building our own tracking with the OpenAI API but the maintenance overhead was killing us. Switched to Otterly and it covers about 70% of what we need out of the box. Still supplementing with custom Perplexity API scripts for the rest."

-- u/head_of_seo_saas, r/TechSEO

Category 4: AI Crawler Monitoring Tools

Server log analysis tools help track which AI bots are crawling your content and how frequently.

- Custom log parsers: grep/awk scripts or ELK stack configurations filtering for GPTBot, ClaudeBot, PerplexityBot user agents

- Cloudflare Bot Analytics: If using Cloudflare, bot analytics dashboards identify AI crawler traffic patterns

- Server-side analytics: Tools like Matomo or custom logging that capture bot traffic excluded by client-side analytics like GA4

MaximusLabs AI integrates across all four categories to provide clients with unified GEO measurement without the complexity of managing multiple tools and data pipelines independently.

Q8. How Do You Monitor AI Crawler Activity on Your Website? [toc=AI Crawler Monitoring]

AI crawler monitoring is the supply-side complement to citation tracking. While citation monitoring tells you where your brand appears in AI answers (the demand side), crawler monitoring tells you which of your pages AI engines are actively ingesting (the supply side). Understanding crawler behavior helps predict future citation potential and diagnose why certain pages earn citations while others do not.

Identifying AI Bots in Your Server Logs

Every major AI platform uses identifiable bots to crawl the web and feed content into their models or real-time retrieval systems. The key bots to monitor are:

- GPTBot (User-Agent token: GPTBot/1.0): OpenAI's crawler for ChatGPT. Respects robots.txt directives. High crawl frequency may indicate that the content is of interest, although OpenAI does not publish how crawl priority is set.

- ChatGPT-User (User-Agent token: ChatGPT-User): Separate from GPTBot, this agent fetches pages on behalf of a user, for example when a ChatGPT user shares a URL or when ChatGPT retrieves content in real time to answer a question.

- ClaudeBot (User-Agent: ClaudeBot): Anthropic's web crawler for Claude. Respects robots.txt and has been increasingly active since 2024.

- PerplexityBot (User-Agent: PerplexityBot): Perplexity's real-time web retrieval bot. Among the most frequent crawlers due to Perplexity's search-first architecture.

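- Google-Extended: A robots.txt product token that controls whether content crawled by Google may be used for Gemini models. It is not a separate crawler and has no user-agent string of its own, so it will not show up in server logs; the requests themselves come from Google's standard crawlers such as Googlebot.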

- Bytespider (User-Agent: Bytespider): ByteDance's crawler that feeds various AI applications.

Setting Up Crawler Monitoring

For Apache or Nginx server logs, filter for AI bot user agents:

- Parse access logs for user-agent strings matching AI bots

- Track crawl frequency per page and per bot

- Monitor which content directories receive the most AI bot attention

- Set up alerts for significant changes in crawl patterns (drops may indicate robots.txt blocking or content quality issues)
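A minimal log-parsing sketch for the steps above is shown below. It assumes the common "combined" access log format used by default in Nginx and Apache, and the sample log lines are fabricated for illustration. Google-Extended is left out of the match list because it is a robots.txt token rather than a user-agent string.

```python
import re
from collections import Counter

# User-agent substrings to look for (matched case-insensitively)
AI_BOTS = ["GPTBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot", "Bytespider"]

# Combined log format: ... "METHOD path HTTP/x" status size "referer" "user-agent"
LINE_RE = re.compile(
    r'"(?:GET|POST|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

def count_ai_bot_hits(lines):
    """Count requests per (bot, path) for known AI bot user agents."""
    hits = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if not match:
            continue
        user_agent = match.group("ua").lower()
        for bot in AI_BOTS:
            if bot.lower() in user_agent:
                hits[(bot, match.group("path"))] += 1
                break
    return hits

# Fabricated sample lines for illustration
sample_log = [
    '203.0.113.7 - - [05/Jan/2026:10:00:00 +0000] "GET /docs/api HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; GPTBot/1.0; +https://openai.com/gptbot)"',
    '203.0.113.7 - - [05/Jan/2026:10:05:00 +0000] "GET /docs/api HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; GPTBot/1.0; +https://openai.com/gptbot)"',
    '198.51.100.4 - - [05/Jan/2026:10:06:00 +0000] "GET /blog/geo HTTP/1.1" 200 9000 "-" '
    '"Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; PerplexityBot/1.0; +https://perplexity.ai/perplexitybot)"',
    '192.0.2.9 - - [05/Jan/2026:10:07:00 +0000] "GET /pricing HTTP/1.1" 200 3000 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36"',
]

hits = count_ai_bot_hits(sample_log)
for (bot, path), count in sorted(hits.items()):
    print(bot, path, count)
```

User-agent strings are easy to spoof, so for anything beyond rough trend monitoring it is worth verifying requests against the IP ranges the operators publish.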

Interpreting Crawler Data

High crawl frequency from a specific bot correlates with (but does not guarantee) citation likelihood. Key patterns to watch for:

- Pages crawled frequently by PerplexityBot are more likely to appear in Perplexity citations because of its real-time retrieval architecture

- Deep crawling patterns (bots accessing subpages, linked resources, and related content) suggest the AI engine is building topical context

- Crawl frequency drops may indicate technical issues (robots.txt changes, server errors, noindex tags) or content quality concerns

- New bot appearances signal emerging AI platforms that may become citation sources

Connecting Crawler Data to Citation Outcomes

The most valuable analysis combines crawler monitoring with citation tracking to answer:

- Which pages that AI bots crawl most frequently also earn the most citations?

- Is there a lag between increased crawl activity and citation appearance?

- Do specific content formats (long-form guides, data tables, FAQ structures) attract more AI crawler attention?

"Once we started monitoring GPTBot and PerplexityBot in our server logs, we discovered they were hammering our technical documentation pages but completely ignoring our marketing pages. That insight completely changed our content strategy."

-- u/dev_seo_ops, r/bigseo

Best Practices for AI Crawler Management

- Do not block AI crawlers unless you have a specific strategic reason. Blocking a platform's bots, such as GPTBot or PerplexityBot, can reduce or remove your content's chances of being cited on that platform.

- Ensure clean crawl paths: Remove unnecessary redirects, fix broken internal links, and maintain fast server response times for bot requests.

- Consider llms.txt: This proposed convention gives LLM-based tools a structured summary of your site's content. Support among major AI crawlers is not yet established, so treat it as a low-cost experiment rather than a guaranteed signal.

- Monitor crawl budgets: If AI bots are consuming significant server resources, use rate limiting rather than outright blocking.

MaximusLabs AI includes crawler monitoring as part of our comprehensive GEO measurement stack, correlating bot behavior data with citation outcomes to identify which content optimizations will have the highest impact on AI search visibility.

Q9. How Do You Measure Dark Traffic from AI Search Engines? [toc=Dark Traffic Measurement]

Dark traffic is the elephant in every GEO measurement room. When a prospect asks Perplexity "what is the best GEO tool for B2B SaaS," reads a response that cites your brand, and then navigates directly to your website by typing your URL, your analytics platform records a "direct" visit. There is no referrer header, no UTM parameter, no campaign tag. The AI search touchpoint that triggered the visit is completely invisible. This is dark traffic: the growing volume of AI-influenced website visits that conventional analytics cannot attribute to their true source.

Why Analytics Platforms Cannot See AI Traffic

Google Analytics 4, Adobe Analytics, and virtually every mainstream web analytics platform rely on the same mechanism to identify traffic sources: the HTTP referrer header. When a user clicks a link on a search engine results page, the browser sends the referring URL to the destination site. But AI search creates three scenarios where this mechanism fails:

- No click occurred: The user consumed your brand information in the AI response and visited your site independently. No referrer exists because there was no referring link.

- Referrer stripping: Some AI platforms strip or obfuscate referrer data when users do click through, causing the visit to appear as direct traffic.

- Delayed action: The user reads about your brand in an AI response, remembers it, and visits your site hours or days later through a branded Google search or direct URL entry.

The 67% Problem

Research indicates that up to 67% of AI-driven traffic goes untracked by conventional analytics. At that upper bound only 33% of AI-driven visits are captured, so for every AI-attributed visit your analytics does record, there are approximately two more that it misses entirely. For B2B brands investing in GEO, this creates a dangerous measurement gap: the true ROI of GEO initiatives can be undercounted by a factor of up to roughly three (1 / 0.33).

Practical Dark Traffic Identification Methods

While perfect attribution is impossible, several proxy measurement approaches can estimate AI-influenced dark traffic with useful accuracy.

Track citation frequency over time alongside direct traffic and branded search traffic. Use statistical correlation to identify whether citation increases predict traffic increases. A strong positive correlation (above 0.3) suggests AI-driven influence.

Identify unexplained spikes in direct or branded search traffic that coincide with known citation gains or losses. If direct traffic jumps 25% the week after you gain citations in Perplexity for a high-volume query, the connection is likely causal.

Add "AI search engine" as an explicit option in your "How did you find us?" surveys. While self-reported data has known biases, it provides a valuable qualitative signal. Many B2B prospects are aware they discovered a brand through ChatGPT or Perplexity and will report it accurately.
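
The first two methods can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than a prescribed methodology: the weekly granularity, the one-week lag, the four-week median baseline, and the function names `lagged_correlation` and `flag_spikes` are all choices made for this example. The 25% spike threshold mirrors the example above.

```python
from math import sqrt
from statistics import mean, median


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 3:
        raise ValueError("need two equal-length series with at least 3 points")
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


def lagged_correlation(citations, traffic, lag_weeks=1):
    """Correlate citations in week t with traffic in week t + lag_weeks."""
    if lag_weeks < 0:
        raise ValueError("lag_weeks must be non-negative")
    if lag_weeks == 0:
        return pearson(citations, traffic)
    return pearson(citations[:-lag_weeks], traffic[lag_weeks:])


def flag_spikes(traffic, threshold=0.25, window=4):
    """Indexes of weeks exceeding the median of the prior `window` weeks by more than `threshold`."""
    spikes = []
    for i in range(window, len(traffic)):
        baseline = median(traffic[i - window:i])
        if baseline > 0 and traffic[i] > baseline * (1 + threshold):
            spikes.append(i)
    return spikes


if __name__ == "__main__":
    # Hypothetical weekly series: citation counts and direct + branded sessions.
    citations = [10, 12, 11, 15, 18, 17, 22, 25]
    traffic = [1000, 1010, 1050, 1040, 1120, 1200, 1180, 1600]
    print("lag-1 correlation:", round(lagged_correlation(citations, traffic), 2))
    print("spike weeks:", flag_spikes(traffic))
```

Flagged weeks are candidates for manual review against the citation log and the campaign calendar, not proof of AI influence on their own.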

The Dark Traffic Proxy Score

The Dark Traffic Proxy Score combines multiple signals into a single metric estimating the percentage of your traffic that is AI-influenced but unattributed:

- Start with your total direct traffic plus branded search traffic

- Subtract estimated baseline (pre-GEO levels of direct and branded traffic)

- Apply correlation coefficient from citation-traffic analysis

- Adjust for seasonal and marketing campaign effects

- The residual, expressed as a share of total direct and branded traffic, represents estimated AI-influenced dark traffic
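
The steps above leave the exact arithmetic open, so the following is one possible reading rather than a definitive formula. It assumes seasonal and campaign lift have already been estimated in sessions, subtracts them before applying the correlation weight (so that known non-AI lift is not scaled by the coefficient), and clamps the coefficient to the range 0 to 1. The `PeriodTraffic` fields and the function name `dark_traffic_proxy` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PeriodTraffic:
    direct: float                # direct sessions in the reporting period
    branded_search: float        # branded search sessions in the period
    baseline: float              # pre-GEO direct + branded sessions for a comparable period
    seasonal_lift: float = 0.0   # sessions attributed to seasonality (estimated separately)
    campaign_lift: float = 0.0   # sessions attributed to other campaigns (estimated separately)


def dark_traffic_proxy(period, correlation):
    """Return (estimated AI-influenced sessions, share of direct + branded traffic)."""
    total = period.direct + period.branded_search
    if total <= 0:
        raise ValueError("total direct + branded traffic must be positive")
    # Lift over baseline that seasonality and campaigns do not explain.
    unexplained = total - period.baseline - period.seasonal_lift - period.campaign_lift
    unexplained = max(unexplained, 0.0)
    # Weight by the citation-traffic correlation, clamped to [0, 1] (an assumption).
    weight = min(max(correlation, 0.0), 1.0)
    sessions = unexplained * weight
    return sessions, sessions / total


if __name__ == "__main__":
    period = PeriodTraffic(direct=6000, branded_search=4000, baseline=7000,
                           seasonal_lift=500, campaign_lift=500)
    sessions, share = dark_traffic_proxy(period, correlation=0.5)
    print(f"{sessions:.0f} sessions, {share:.1%} of direct + branded traffic")
```

Because the score inherits the uncertainty of every input, it is better reported as a range across plausible baselines and coefficients than as a single number.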

"We added 'ChatGPT/AI search' to our demo request form's source field. Within two months, it became our third-largest self-reported source behind Google and referrals. And we know self-reported data underestimates by 30-50%."

-- u/demand_gen_director, r/SaaS

MaximusLabs' dark traffic intelligence layer automates the correlation between citation monitoring data and web analytics patterns. Instead of requiring clients to manually build regression models and anomaly detection, our platform produces AI-influenced traffic estimates that update as citation data refreshes. This transforms dark traffic from a measurement black hole into a quantifiable, reportable metric.

The 527% growth in AI-referred sessions between January and May 2025 suggests that dark traffic volumes are growing rapidly as well. Brands that wait to implement proxy measurement will find an ever-larger gap between their reported analytics and their actual performance.

Q10. What Are the Measurement Blind Spots in GEO That Nobody Talks About? [toc=GEO Blind Spots]

Honest GEO measurement requires acknowledging what cannot be measured, not just what can. Every AI search platform operates with internal mechanisms that are invisible to external observers, and pretending these blind spots do not exist leads to overconfident measurement and flawed strategy. The following blind spots represent the boundaries of current GEO measurement capability.

Blind Spot 1: Internal Confidence Thresholds

Every LLM uses internal confidence scores to decide which sources to cite and which to suppress. These thresholds are proprietary, undocumented, and vary by model version. A page might be "almost cited" hundreds of times without ever appearing in a response because it falls just below the model's confidence threshold. External measurement cannot detect near-misses, only actual citations.

Blind Spot 2: Personalization Effects

AI search responses are increasingly personalized based on conversation history, user preferences, and contextual signals. Two users asking the identical query may receive different citations based on their prior interactions. This personalization layer is unmeasurable externally and means that any citation monitoring methodology captures only one possible version of the AI's response.

Blind Spot 3: Training Data Influence Boundary

The distinction between citations driven by training data (information the model learned during pre-training) and citations driven by real-time retrieval (information fetched from the web during query processing) is blurry and undocumented. Content might be cited because it was prominent in training data, because it was retrieved in real-time, or because of some combination. This ambiguity makes it difficult to determine whether content optimization efforts are influencing training data inclusion, real-time retrieval, or both.

Blind Spot 4: Query Fan-Out Composition

Google's patent portfolio reveals a multi-stage citation pipeline involving query fan-out, where a single user query is decomposed into multiple sub-queries. Each sub-query retrieves and ranks different source candidates. The composition of this fan-out, which sub-queries are generated and how they are weighted, is completely invisible to external observers. Two seemingly similar queries might produce radically different fan-out patterns and therefore different citation outcomes.

Blind Spot 5: Pairwise Comparison Outcomes

Within the citation pipeline, LLMs use pairwise passage ranking via reasoning to evaluate candidate sources against each other. The outcomes of these comparisons determine which sources are cited and which are suppressed. Since these comparisons happen internally, there is no way to observe why your content was selected over a competitor's, or vice versa.

Blind Spot 6: Citation Suppression Logic

AI platforms actively suppress certain types of citations based on undocumented criteria. A source might be relevant, authoritative, and high-quality, yet still be suppressed due to duplicate content detection, source diversity requirements, or policy-based filtering. The suppression logic is proprietary and changes with model updates.

What This Means for GEO Measurement Strategy

These blind spots do not invalidate GEO measurement. They define its boundaries. Effective GEO measurement programs:

- Use statistical sampling rather than point estimates to account for personalization variance

- Track trends rather than absolute numbers to smooth out confidence threshold effects

- Monitor across multiple platforms to mitigate single-platform personalization bias

- Combine citation monitoring with crawler analysis to capture both supply and demand signals

- Maintain realistic confidence intervals in all reported metrics
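
As a sketch of what sampling with realistic confidence intervals can look like in practice, the example below computes a Wilson score interval for a citation rate from repeated runs of the same prompt set. The Wilson interval is a standard choice for proportions and is used here as an assumption; the source does not prescribe a specific interval method. The function name `wilson_interval` and the sample counts are illustrative.

```python
from math import sqrt


def wilson_interval(cited, samples, z=1.96):
    """Wilson score interval for a citation rate (z=1.96 gives roughly 95% coverage)."""
    if samples <= 0 or not 0 <= cited <= samples:
        raise ValueError("require samples > 0 and 0 <= cited <= samples")
    p = cited / samples
    denom = 1 + z * z / samples
    center = (p + z * z / (2 * samples)) / denom
    half_width = z * sqrt(p * (1 - p) / samples + z * z / (4 * samples * samples)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


if __name__ == "__main__":
    # Hypothetical: the brand was cited in 35 of 100 sampled AI responses.
    low, high = wilson_interval(cited=35, samples=100)
    print(f"citation rate 35%, interval {low:.1%} to {high:.1%}")
```

With 100 samples, an observed 35% citation rate carries an interval of roughly 26% to 45%, which is why small month-over-month movements should not be reported as wins or losses without more samples.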

The brands and agencies that build trust with stakeholders are those that honestly communicate what GEO measurement can and cannot tell them. Overpromising measurement precision erodes credibility when results inevitably show variance.

Q11. How Do You Report GEO Results to Stakeholders and the C-Suite? [toc=Stakeholder Reporting]

Reporting GEO measurement results to executives presents a unique communication challenge. Most C-suite leaders understand organic traffic graphs, keyword ranking reports, and conversion funnels. They have spent years developing intuitions about what these metrics mean for business performance. GEO metrics like citation frequency, AI Share of Voice, and citation stability are unfamiliar territory. The gap between measurement capability and executive understanding can kill GEO programs before they prove their value, not because the results are poor, but because the reporting fails to translate AI visibility into business language.

Why Traditional SEO Reports Do Not Work for GEO

Traditional SEO reporting follows a predictable template: keyword rankings went up or down, organic traffic increased or decreased, conversions from organic improved or declined. This format fails for GEO reporting for three specific reasons:

- No rank equivalence: "We are now cited in 35% of AI responses for our category" has no intuitive equivalent in the executive's mental model

- No traffic attribution: "AI-influenced traffic increased" cannot be shown in a standard GA4 dashboard because dark traffic is invisible

- No competitive context: Executives are accustomed to seeing competitive rank comparisons; citation-based competitive intelligence requires different visualization

The GEO Executive Reporting Framework

Effective GEO reporting to the C-suite follows a three-layer structure: business impact first, then AI visibility data, then competitive context. The items below are listed in that order.

- AI-influenced pipeline value (estimated through dark traffic proxy and self-reported attribution)

- Deal velocity changes for AI-exposed buyers versus control group

- Cost-per-AI-influenced-lead compared to other channels

- Brand search volume trends correlated with citation gains

- AI Share of Voice trend over time (monthly chart)

- Citation frequency by platform (ChatGPT, Perplexity, Gemini, Google AI Overviews)

- Citation Stability Index month-over-month

- New citation gains and losses (wins and risks)

- Citations gained from and lost to specific competitors

- Category AI visibility benchmarks

- Competitor GEO activity signals (new content, crawler behavior changes)

Dashboard Design Principles for GEO Reporting

When designing GEO measurement dashboards for executive audiences:

- Use familiar formats: Mirror the layout of existing marketing dashboards so executives can orient quickly

- Annotate AI-specific metrics: Every GEO metric should have a one-line explanation on the dashboard itself

- Show trendlines, not snapshots: Single-point GEO metrics are unreliable due to citation drift. Always show trending data over at least 8 weeks

- Include confidence indicators: Given measurement blind spots, report metrics with confidence ranges rather than false precision

- Connect every metric to revenue: Each visibility metric should have a directional arrow connecting it to a business outcome

"The first three months of our GEO reporting were a disaster. The CMO kept asking 'but what does citation frequency mean for pipeline?' We had no answer. Once we started leading with estimated AI-influenced pipeline value, the entire conversation changed."

-- u/vp_marketing_b2b, r/marketing

MaximusLabs Executive Reporting

MaximusLabs provides clients with executive-ready GEO dashboards that translate citation intelligence into revenue language. Our reporting connects every citation metric to pipeline impact, making it straightforward for CMOs to present GEO investment results to the board. The dashboard format was designed through dozens of executive presentations and refined based on the questions that C-suite leaders actually ask, not the metrics that measurement teams want to report.

Q12. What Does the Future of GEO Measurement Look Like Beyond 2026? [toc=Future of GEO Measurement]

GEO measurement in 2026 is comparable to web analytics before Google Analytics launched in 2005. The need is clear, the early tools exist, but standardization, industry consensus, and mature infrastructure are still years away. The brands that invest in measurement infrastructure now will build a compounding data advantage that late adopters cannot replicate, just as companies that adopted web analytics early gained insights that informed a decade of digital strategy.

Current Limitations That Will Be Resolved

Several measurement challenges that define GEO in 2026 are likely to improve as the ecosystem matures.

Standardized Citation APIs: Today, monitoring citations requires cobbling together multiple APIs, scrapers, and parsers. AI platforms have economic incentives to provide standardized citation reporting APIs, similar to how Google eventually provided Search Console data. As the advertising models for AI search engines evolve, citation analytics will likely become a product feature.

Unified GEO Analytics Dashboards: The current tool landscape is fragmented across dedicated GEO platforms, SERP scrapers, LLM APIs, and log analyzers. Market consolidation will produce comprehensive platforms that integrate all measurement layers. Several GEO monitoring platforms (Otterly.AI, Peec AI, Prominara) are already expanding their coverage.

Emerging Measurement Capabilities

Real-Time Citation Monitoring: Current measurement relies on periodic sampling. As API access improves and costs decrease, real-time citation monitoring will become feasible, providing instant alerts when citation presence changes.

AI-Native Attribution Models: Analytics platforms will develop attribution models specifically designed for zero-click AI search influence. Google Analytics already tracks AI Overview click-throughs; future versions will likely model AI-influenced visits even without clicks.

Cross-Platform Identity Resolution: As AI search behavior data accumulates, it will become possible to trace a single buyer's journey across multiple AI platforms, traditional search, and website interactions, creating unified attribution paths.

Measurement Trends to Watch

- Regulation-driven transparency: Government interest in AI transparency may force platforms to disclose citation methodologies, reducing the blind spots documented in this article

- Publisher citation agreements: Deals between AI platforms and content publishers (similar to the AP/OpenAI agreement) may include citation reporting and analytics as standard terms

- Industry benchmarking consortiums: Similar to how comScore standardized web audience measurement, GEO-specific measurement standards bodies will likely emerge

- Training data provenance tools: Emerging technology for tracking how specific content influences AI model outputs will add a new measurement dimension

The Compounding Data Advantage

The Blue Ocean insight that defines GEO measurement strategy is this: measurement infrastructure creates compounding advantages. Every month of citation data creates a longer trendline, better statistical models, deeper understanding of platform-specific citation behavior, and more refined attribution. Brands that start measuring in 2026 will have 12 months of longitudinal data by 2027, enabling trend analysis that brands starting in 2027 cannot replicate.

MaximusLabs AI is building the future of GEO measurement today. Our investment in citation intelligence infrastructure, attribution modeling, and cross-platform monitoring positions our clients at the forefront of a measurement revolution. As GEO measurement matures from experimental to essential, the brands with established measurement programs will be the ones that prove ROI, secure continued investment, and compound their AI search visibility advantage.

The question is not whether GEO measurement will become a standard part of B2B marketing infrastructure. It is whether your brand will have the historical data and measurement maturity to compete when it does.
